Website Content Scraping: How to Analyze Data Effectively

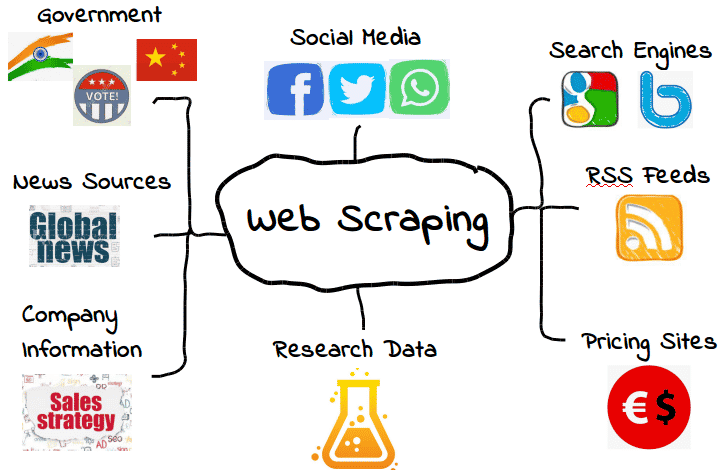

Website content scraping is a technique that individuals and businesses use to extract information from online platforms. This practice, often referred to as web content extraction, lets users gather data for website analysis or market research. With data scraping tools, users can automate the process of pulling content from websites, which simplifies compiling content summaries and insights. As online content becomes more abundant, the need for efficient website scraping is steadily increasing, and the skill is sought after in many industries. Whether it’s for competitive analysis or aggregating information, website content scraping is a useful resource in today’s digital landscape.

Gathering online information through automated processes is also known as data harvesting or content mining. This method of web data extraction allows users to compile and analyze relevant information from multiple sources, enriching their research or informing their business strategies. With these techniques, users can perform comprehensive studies without the tedious manual labor of copying content by hand. As the internet continues to expand, collecting data from diverse websites has become a valuable skill for marketers and researchers alike, because it turns scattered online content into actionable insights.

Understanding Website Content Scraping

Website content scraping is a technique used to extract information from web pages. It involves automated tools or scripts that navigate through web content to gather data. This process is crucial for businesses and researchers looking to collect large amounts of information from various sources quickly. By leveraging web scraping, users can compile data for analysis, monitor competitor activity, or aggregate content in one place for further insights.

However, it’s important to distinguish between ethical scraping practices and those that violate a website’s terms of service. Many websites, including major news organizations, have restrictions that prohibit unauthorized scraping. Therefore, practitioners should ensure compliance with legal frameworks and respect copyright laws while conducting web content extraction to avoid potential legal consequences.

Techniques for Effective Web Content Extraction

When embarking on data scraping projects, various techniques can enhance the extraction process. One effective method is to use an HTML parsing library such as Beautiful Soup or a crawling framework such as Scrapy, which help users structure their scraping efforts. These tools make it easier to identify the relevant tags and elements within a webpage and to retrieve specific data like headlines, article text, or images.
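As a minimal sketch of tag-based extraction, the example below pulls headline text out of a page. It deliberately uses Python's standard-library `html.parser` as a dependency-free stand-in; Beautiful Soup or Scrapy would express the same idea with less code. The `HeadlineParser` class, the `extract_headlines` function, the sample HTML, and the choice of `<h2>` as the headline tag are all assumptions made for illustration.

```python
from html.parser import HTMLParser


class HeadlineParser(HTMLParser):
    """Collect the text of every <h2> element (assumed to hold headlines)."""

    def __init__(self):
        super().__init__()
        self.headlines = []
        self._in_h2 = False
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if tag == "h2":
            self._in_h2 = True
            self._buffer = []

    def handle_data(self, data):
        # Text inside nested tags such as <em> also arrives here.
        if self._in_h2:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "h2" and self._in_h2:
            self.headlines.append("".join(self._buffer).strip())
            self._in_h2 = False


def extract_headlines(html):
    """Return the text of all <h2> elements found in the HTML string."""
    parser = HeadlineParser()
    parser.feed(html)
    parser.close()
    return parser.headlines


# Hypothetical page content used only for this example.
SAMPLE_HTML = """
<html><body>
  <h1>Site title</h1>
  <h2>First <em>headline</em></h2>
  <p>Some article text.</p>
  <h2>Second headline</h2>
</body></html>
"""

print(extract_headlines(SAMPLE_HTML))
```

On a real site the headline tag or class will differ, so the tag name would need to be adjusted after inspecting the page's markup.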

In addition to scripts and frameworks, employing regular expressions can significantly streamline the data extraction process. By defining specific patterns, users can capture the precise information needed while avoiding irrelevant content. This results not only in cleaner datasets but also in faster website analysis. Combining these techniques can provide a robust approach to web content extraction that maximizes accuracy and efficiency.
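The pattern-based approach can be sketched as follows. The two patterns (US-dollar prices and ISO-style dates), the function names, and the sample text are assumptions chosen for illustration. Regular expressions tend to work best on text that has already been pulled out of the HTML, since matching raw markup directly is brittle.

```python
import re

# Assumed formats: prices like "$19.99" or "$25", dates like "2024-03-15".
PRICE_PATTERN = re.compile(r"\$(\d+(?:\.\d{2})?)")
DATE_PATTERN = re.compile(r"\b(\d{4})-(\d{2})-(\d{2})\b")


def extract_prices(text):
    """Return every dollar amount in the text as a float."""
    return [float(match) for match in PRICE_PATTERN.findall(text)]


def extract_dates(text):
    """Return every YYYY-MM-DD date in the text as a string."""
    return ["-".join(parts) for parts in DATE_PATTERN.findall(text)]


# Hypothetical text, as it might look after HTML tags have been stripped.
SAMPLE_TEXT = "Published 2024-03-15. Widget now $19.99 (was $25), ships by 2024-03-20."

print(extract_prices(SAMPLE_TEXT))
print(extract_dates(SAMPLE_TEXT))
```

Precompiling the patterns keeps the definitions in one place, which makes them easier to adjust when a site changes how it formats prices or dates.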

The Importance of Data Scraping for Businesses

For many businesses, data scraping is an invaluable resource that drives strategic decisions. By collecting and analyzing various data points from competitors, market trends, and customer behaviors, companies can make informed decisions to improve their services and products. Data scraping allows for the aggregation of granular data, helping organizations to identify emerging patterns that might go unnoticed in standard data sets.

Furthermore, businesses can utilize scraped data to enhance their content marketing strategies. By understanding what topics are trending or what keywords are being searched, organizations can tailor their content to meet customer needs, optimize SEO, and improve visibility in search engines. This strategic approach leads to targeted marketing efforts that can significantly increase customer engagement and conversion rates.

Website Analysis and Its Benefits

Website analysis is a critical component of maintaining a competitive edge in today’s digital landscape. It involves evaluating various performance metrics such as traffic, user behavior, and engagement levels. By harnessing data scraping techniques, businesses can conduct thorough analyses of both their own websites and those of their competitors, gathering insights that drive improvement.

Moreover, website analysis not only helps in identifying strong points and weaknesses in a web structure but also informs strategies for content development. Understanding which content pieces resonate with audiences allows businesses to refine their editorial strategy, ensuring content aligns with viewer interests while improving overall site performance.

Creating Effective Content Summaries Through Scraping

Content summarization is a fundamental application of data scraping that enables users to distill large pieces of information into digestible formats. By summarizing content from multiple sources, businesses can create concise reports that highlight important information quickly. This ability to synthesize large volumes of data is especially crucial in industries where time-sensitive information affects decision-making.

Tools developed for scraping can be configured to not only extract data but also summarize it based on predefined criteria, making the content summary process efficient. This dual functionality ensures that organizations can keep their stakeholders informed without overwhelming them with extensive reports, thus enhancing operational efficiency.
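One simple way to summarize by predefined criteria is to keep only the sentences that mention chosen keywords. The sketch below is one possible interpretation, not a description of any particular tool: the `summarize` function, its keyword-count scoring, and the sample article are assumptions made for illustration.

```python
import re


def summarize(text, keywords, max_sentences=2):
    """Keep the sentences that mention the given keywords most often.

    Sentences are scored by keyword occurrences; the top scorers are
    returned in their original order.
    """
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    lowered = [keyword.lower() for keyword in keywords]

    scored = []
    for index, sentence in enumerate(sentences):
        words = re.findall(r"[a-z0-9']+", sentence.lower())
        score = sum(words.count(keyword) for keyword in lowered)
        if score > 0:
            scored.append((score, index, sentence))

    # Highest score first; earlier sentences win ties.
    top = sorted(scored, key=lambda item: (-item[0], item[1]))[:max_sentences]
    # Restore document order so the summary reads naturally.
    return [sentence for _, _, sentence in sorted(top, key=lambda item: item[1])]


# Hypothetical scraped text used only for this example.
ARTICLE = (
    "The company reported record revenue this quarter. "
    "The office also got new chairs. "
    "Revenue growth was driven by pricing changes. "
    "Analysts expect pricing to stay stable."
)

for line in summarize(ARTICLE, ["revenue", "pricing"]):
    print(line)
```

Keyword scoring is crude compared with dedicated summarization models, but it is transparent and easy to tune when the criteria are known in advance.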

Challenges in Web Content Extraction

While web content extraction is a powerful tool, it is not without its challenges. Websites often update their layouts, which can disrupt established scraping scripts and tools. This constant change requires ongoing maintenance and adjustments to scraping techniques. Users must be prepared to adapt their solutions to ensure consistency in data retrieval.

Additionally, ethical challenges abound in content scraping, particularly with regard to data privacy and user consent. As data protection regulations like GDPR become increasingly stringent, users of scraping technologies need to navigate these legal landscapes carefully. Striking a balance between obtaining valuable insights and adhering to ethical guidelines is essential to sustain responsible scraping practices.

The Role of Web Scraping in Market Research

Web scraping plays a pivotal role in market research by providing access to vast amounts of data, which can be analyzed to uncover consumer trends and preferences. By aggregating information from various competitors and market sources, businesses can gain insights that inform their strategies and product development. The ability to monitor market signals through scraping allows organizations to remain agile in a rapidly changing landscape.

Moreover, leveraging scraping can yield powerful feedback loops, where businesses adjust their strategies based on real-time data. This dynamic approach enables them to anticipate customer needs and adjust marketing campaigns accordingly. By effectively utilizing data scraping for market research, companies can stay ahead of the curve and tailor their offerings to align with audience expectations.

Choosing the Right Tools for Data Scraping

Selecting the right tools for data scraping is critical to the success of any project. Numerous software options and programming libraries are available, each with its strengths and weaknesses. For beginner scrapers, user-friendly tools like ParseHub and Octoparse offer no-code solutions that simplify the extraction process.

For advanced users, tools like Selenium and Scrapy provide more customizable options that can handle complex scraping tasks. Selenium automates a real browser, which helps with pages that render their content through JavaScript, while Scrapy is a framework for building crawlers that follow links across many pages. These tools allow for more robust solutions capable of navigating difficult web structures or bypassing anti-scraping measures. Ultimately, the choice of tool should align with the project requirements, skill level, and desired outcomes.

Ethical Considerations in Web Scraping

As the prevalence of data scraping grows, so do the ethical considerations surrounding it. Essential to responsible scraping is understanding the legal implications and ethical boundaries associated with extracting information from websites. Practitioners must be cognizant of the terms of service of the sites they target, as many prohibit scraping.

Additionally, respecting user privacy and data rights is paramount. Businesses should strive for transparency in their scraping practices, ensuring they do not compromise user confidentiality. Addressing these ethical considerations is vital for fostering a trustworthy digital environment where data can be used responsibly.

Frequently Asked Questions

What is website content scraping and how does it work?

Website content scraping refers to the automated process of extracting data from web pages. It works by sending a request to a website, downloading the HTML content, and then parsing that HTML to retrieve specific information, such as text, images, or links.
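The request, download, and parse steps can be sketched with Python's standard library alone. The function names, the `example-scraper/0.1` user-agent string, and the sample HTML are assumptions for illustration. Only the parsing step runs here, on a local string, so the example makes no network calls.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin
from urllib.request import Request, urlopen


class LinkParser(HTMLParser):
    """Collect the href of every <a> tag, resolved against a base URL."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(urljoin(self.base_url, href))


def fetch_html(url, timeout=10):
    """Steps 1 and 2: send the request and download the HTML as text."""
    request = Request(url, headers={"User-Agent": "example-scraper/0.1"})
    with urlopen(request, timeout=timeout) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


def parse_links(html, base_url):
    """Step 3: parse the HTML and return absolute link URLs."""
    parser = LinkParser(base_url)
    parser.feed(html)
    parser.close()
    return parser.links


# Hypothetical markup standing in for a downloaded page.
SAMPLE_HTML = '<a href="/about">About</a> <a href="https://example.org/x">X</a> <a>no href</a>'

print(parse_links(SAMPLE_HTML, "https://example.com/news/"))
```

In a real run, the output of `fetch_html(url)` would be passed to `parse_links(html, url)`, ideally after confirming that the site permits automated access.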

Is it legal to scrape content from websites?

The legality of scraping websites varies depending on the website’s terms of service and the data being extracted. Always review a website’s ‘robots.txt’ file, which states which paths automated crawlers are asked to avoid, and its terms of use to understand what is allowed. Many websites prohibit web content extraction, especially for commercial use.
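A robots.txt check can be automated with Python's standard-library `urllib.robotparser`. In this sketch the rules are parsed from a local string so that it runs without network access; the sample rules, the `build_robots_checker` helper, and the `example-scraper` agent name are assumptions for illustration. Passing this check does not replace reading the site's terms of use.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; a real one lives at https://<site>/robots.txt.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Allow: /
"""


def build_robots_checker(robots_txt):
    """Return a parser loaded with the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


checker = build_robots_checker(SAMPLE_ROBOTS_TXT)

# can_fetch(user_agent, url) reports whether the rules allow the URL.
print(checker.can_fetch("example-scraper", "https://example.com/articles/1"))
print(checker.can_fetch("example-scraper", "https://example.com/private/data"))
```

For a live site, `RobotFileParser.set_url()` followed by `read()` downloads and parses the file instead of reading it from a string.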

What tools are available for data scraping?

There are numerous tools available for data scraping, including popular ones like Beautiful Soup, Scrapy, and Selenium. These tools help automate the website analysis process and simplify the extraction of desired content.

Can I use website scraping to gather data for research purposes?

Yes, data scraping can be utilized for research purposes. However, it’s crucial to ensure that the scraped data complies with legal and ethical standards, including respecting copyright laws and privacy regulations related to web content extraction.

What are the best practices for scraping content from websites?

Best practices for scraping content include respecting the website’s terms of service, limiting the request rate to avoid overloading the server, and sending a User-Agent string that honestly identifies your scraper. Additionally, handle extracted data responsibly, especially if it contains sensitive or copyrighted material.
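Rate limiting and an identifying User-Agent can be sketched as below. The `Throttle` class, the `build_request` helper, the 0.1-second interval, and the user-agent string with its contact address are all assumptions for illustration; a polite interval for a real site is usually much longer. The requests are built but never sent.

```python
import time
from urllib.request import Request


class Throttle:
    """Enforce a minimum delay between consecutive requests."""

    def __init__(self, min_interval_seconds, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval_seconds
        self._clock = clock
        self._sleep = sleep
        self._last_request = None

    def wait(self):
        """Sleep if the previous request was too recent; return seconds slept."""
        now = self._clock()
        waited = 0.0
        if self._last_request is not None:
            remaining = self.min_interval - (now - self._last_request)
            if remaining > 0:
                self._sleep(remaining)
                waited = remaining
        self._last_request = self._clock()
        return waited


def build_request(url, user_agent="example-scraper/0.1 (contact@example.com)"):
    """Create a request that identifies the scraper to the site operator."""
    return Request(url, headers={"User-Agent": user_agent})


# Demonstration only: prepare three requests with a short pause between them.
throttle = Throttle(0.1)
for path in ("a", "b", "c"):
    throttle.wait()
    request = build_request(f"https://example.com/{path}")
    print(request.full_url, request.get_header("User-agent"))
```

Injecting the clock and sleep functions keeps the throttle easy to test without real delays.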

How can website analysis benefit from web scraping?

Website analysis benefits from web scraping by providing insights into competitor strategies, market trends, and customer preferences. Scraping can help automate the collection of relevant data, enabling businesses to make informed decisions based on comprehensive content summaries.

What are common challenges faced during data scraping?

Common challenges in data scraping include dealing with anti-scraping technologies, handling dynamic websites that load content with JavaScript, and parsing inconsistently structured pages accurately. A working knowledge of HTML and solid troubleshooting skills are essential for effective web content extraction.

What are some ethical considerations when conducting content scraping?

When engaging in content scraping, ethical considerations include adhering to website terms of service, not overloading servers with excessive requests, and avoiding the collection of personal data without consent. Always prioritize ethical web scraping practices.

Can I scrape websites that require login credentials?

Yes, it is possible to scrape websites that require login credentials by using tools or libraries that can simulate user logins. However, be mindful of the site’s terms of service, and ensure you have permission to access and extract data after logging in.

What are the advantages of using automated scraping tools for web content extraction?

Automated scraping tools streamline the web content extraction process, allowing for faster data collection and analysis. They help reduce manual effort, improve accuracy in data retrieval, and can be scheduled to scrape data regularly, ensuring up-to-date information.

| Key Point | Description |
|---|---|
| Content Scraping Restrictions | Websites like nytimes.com often have specific policies against scraping their content. |
| User Provided Content | If users provide headlines or text, summarization and analysis can be performed without scraping. |
| Legal and Ethical Considerations | Scraping content without permission may lead to legal issues. |

Summary

Website content scraping involves extracting information from web pages, but caution is necessary due to restrictions placed by many sites, such as the New York Times. To effectively scrape content from a website, users should adhere to ethical and legal standards while ensuring that they only scrape permitted data or utilize text provided directly by users for summarization. This approach not only respects copyright laws but also enhances the ability to tailor the content to specific needs.