Web Scraping Techniques: A Comprehensive Guide

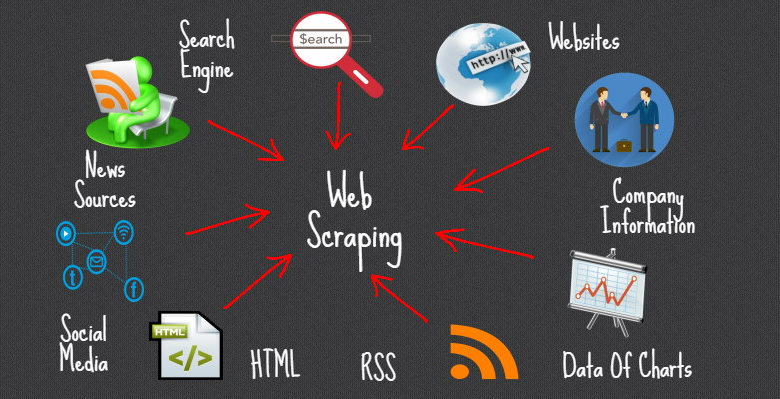

Web scraping techniques let software collect data from websites automatically instead of by hand. By combining data extraction methods such as HTML parsing with web crawler tools, users can automate data collection and feed the results into analyses of market trends and customer behavior. These techniques simplify scraping content from multiple sources and make extracting information from websites more efficient. This guide covers the main tools, best practices, legal and ethical considerations, and common challenges.

In today’s data-driven world, gathering relevant information has become crucial for many organizations. Techniques for harvesting web data and automating data retrieval are essential for efficient data processing. By leveraging sophisticated online data extraction tools, entities can uncover crucial insights that drive decision-making. The ability to programmatically capture content from diverse online platforms ensures that businesses remain competitive and informed. Ultimately, these strategies are not just about collecting data; they enable a deeper understanding of trends and behaviors within the marketplace.

Understanding Web Scraping Techniques

Web scraping techniques involve automated methods of extracting data from websites, enabling users to collect vast amounts of information without the need for manual copying and pasting. These techniques may include various data extraction methods, such as HTML parsing, which involves fetching the website’s HTML and identifying the specific data points desired. Additionally, using web crawler tools can facilitate the process, allowing users to automate data collection on a larger scale.
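As a rough illustration of HTML parsing, the sketch below pulls price strings out of a small HTML fragment. It uses Python's standard-library `html.parser` rather than a third-party library so that it runs without extra installs. The markup, the `price` class name, and the `extract_prices` helper are assumptions made up for this example, not taken from any real site.

```python
from html.parser import HTMLParser


class PriceParser(HTMLParser):
    """Collect the text of every <span class="price"> element."""

    def __init__(self):
        super().__init__()
        self._in_price = False
        self.prices = []

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs for the tag.
        if tag == "span" and ("class", "price") in attrs:
            self._in_price = True

    def handle_endtag(self, tag):
        if tag == "span":
            self._in_price = False

    def handle_data(self, data):
        # Keep only non-empty text found inside a price span.
        if self._in_price and data.strip():
            self.prices.append(data.strip())


# Hypothetical HTML standing in for a page that was already fetched.
SAMPLE_HTML = """
<ul>
  <li><span class="name">Widget</span> <span class="price">$9.99</span></li>
  <li><span class="name">Gadget</span> <span class="price">$24.50</span></li>
</ul>
"""


def extract_prices(html):
    """Return the price strings found in the given HTML text."""
    parser = PriceParser()
    parser.feed(html)
    parser.close()
    return parser.prices
```

Calling `extract_prices(SAMPLE_HTML)` returns the two price strings in document order. On a real page the selector logic would need to match that page's actual structure.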

In the world of web scraping, the ability to efficiently gather and analyze large datasets is crucial for market research, competitive analysis, and more. By implementing effective web scraping techniques, businesses can gain insights from their competitors and adapt their strategies accordingly. Thus, mastering these techniques is not only beneficial but essential for anyone looking to leverage online information.

Scraping Content: Tools and Strategies

When it comes to scraping content from websites, there are numerous tools and strategies available to facilitate the process. Popular web scraping frameworks like Beautiful Soup and Scrapy provide users with the flexibility to develop customized scrapers tailored to their specific needs. With these tools, users can streamline the process of extracting information from websites, enhancing both efficiency and accuracy in data collection.

In addition to using frameworks, adopting the right strategies will amplify the success of web scraping projects. For instance, implementing rate limiting and user-agent rotation can help mimic human browsing behavior, reducing the risk of being blocked by target websites. Furthermore, understanding the legal implications of web scraping and ensuring compliance with a site’s terms of service is essential to avoid potential legal issues.
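One possible way to combine rate limiting with user-agent rotation is sketched below. The `PoliteSession` class, its parameter names, and the agent strings passed to it are hypothetical. The clock and sleep functions are injectable only so the pacing logic can be exercised without real waiting.

```python
import itertools
import time


class PoliteSession:
    """Paces requests and cycles through a list of User-Agent strings."""

    def __init__(self, user_agents, min_interval,
                 clock=time.monotonic, sleep=time.sleep):
        self._agents = itertools.cycle(user_agents)
        self._min_interval = min_interval  # seconds between requests
        self._clock = clock
        self._sleep = sleep
        self._last_request = None

    def next_headers(self):
        """Wait until min_interval has elapsed, then return request headers."""
        if self._last_request is not None:
            elapsed = self._clock() - self._last_request
            wait = self._min_interval - elapsed
            if wait > 0:
                self._sleep(wait)
        self._last_request = self._clock()
        # Rotate to the next User-Agent in the cycle.
        return {"User-Agent": next(self._agents)}
```

A scraper would call `next_headers()` immediately before each request and pass the returned dictionary to whatever HTTP client it uses. A suitable interval depends on the target site and on any crawl delay it publishes.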

Automating Data Collection for Business Insights

Automating data collection through web scraping tools is transforming how businesses gather insights from the web. Scheduled scrapers keep a company’s databases close to current, which provides a competitive edge in rapidly changing market conditions. By frequently scraping relevant competitors’ websites, businesses can quickly adapt their offerings, marketing strategies, and pricing structures to meet evolving customer demands.

Moreover, automation in data collection eliminates the tedious manual effort often associated with gathering information. Teams can focus on analyzing scraped data rather than spending valuable time on repetitive tasks. Implementing automated web scraping processes ultimately leads to more informed decision-making and enhanced operational efficiency, setting companies on the path to success in their respective industries.

Extracting Information from Websites: Best Practices

Extracting information from websites successfully involves following best practices that ensure both accuracy and compliance with regulations. One key practice is to respect the website’s robots.txt file, which tells web crawlers which parts of the site they may access. The file is a voluntary convention rather than an access control, so honoring it is the crawler’s responsibility. By adhering to these guidelines, organizations reduce the risk of legal disputes and foster a positive relationship with web administrators.
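A minimal sketch of a robots.txt check, using Python's standard-library `urllib.robotparser`. The rules in `SAMPLE_ROBOTS_TXT`, the `example-bot` agent name, and the `build_parser` helper are invented for illustration. A real crawler would load the file from the target site, for example with the parser's `set_url()` and `read()` methods.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; real files vary from site to site.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""


def build_parser(robots_txt):
    """Parse robots.txt text into a RobotFileParser without network access."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


rules = build_parser(SAMPLE_ROBOTS_TXT)

# Check each URL before requesting it.
allowed = rules.can_fetch("example-bot", "https://example.com/products/1")
blocked = rules.can_fetch("example-bot", "https://example.com/private/report")
delay = rules.crawl_delay("example-bot")  # seconds, or None if not specified
```

Here `allowed` is true, `blocked` is false, and `delay` holds the crawl delay that the sample file requests.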

Additionally, ensuring the reliability of the data collected is crucial. Implementing error-handling mechanisms and validating the extracted information can help maintain the integrity of the datasets. By following these best practices, businesses can maximize the value of web scraping efforts while minimizing risks associated with unauthorized access or data inaccuracies.
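The error handling and validation described above might look roughly like the following. Both function names, the retry policy, and the `name` and `price` record fields are assumptions chosen for the example. `fetch` stands for any callable that downloads a URL and raises `OSError` (or a subclass such as `ConnectionError`) on network failure.

```python
import time


def fetch_with_retries(fetch, url, attempts=3, base_delay=1.0, sleep=time.sleep):
    """Call fetch(url), retrying on OSError with exponential backoff."""
    for attempt in range(attempts):
        try:
            return fetch(url)
        except OSError:
            if attempt == attempts - 1:
                raise  # out of retries: surface the last error
            sleep(base_delay * 2 ** attempt)  # 1s, 2s, 4s, ...


def validate_record(record):
    """Return a list of problems in one scraped record (empty means valid)."""
    problems = []
    if not record.get("name", "").strip():
        problems.append("missing name")
    try:
        if float(record.get("price", "")) < 0:
            problems.append("negative price")
    except (TypeError, ValueError):
        problems.append("price is not a number")
    return problems
```

Records that come back with a non-empty problem list can be logged and set aside rather than written to the dataset, so that one malformed page does not contaminate the rest.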

The Role of Web Crawler Tools in Data Extraction

Web crawler tools play a pivotal role in data extraction, allowing users to traverse the web autonomously to collect desired data points. These tools can be programmed to follow links, download pages, and extract specific information based on user-defined criteria. By utilizing web crawler tools, organizations can scale their data collection efforts, uncovering actionable insights from even the most extensive digital landscapes.
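To make the link-following idea concrete, here is a small breadth-first crawler sketch. It is not how any particular crawler tool is implemented. The `crawl` function, the `LinkParser` class, and the in-memory `SITE` dictionary are hypothetical, and `fetch` is passed in as a parameter so the example runs without network access.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkParser(HTMLParser):
    """Collect the href value of every <a> tag."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def crawl(start_url, fetch, max_pages=50):
    """Breadth-first crawl limited to the host of start_url.

    fetch maps a URL to HTML text, or None if the page is unavailable.
    Returns the URLs visited, in visiting order.
    """
    host = urlparse(start_url).netloc
    queue = deque([start_url])
    seen = {start_url}
    visited = []
    while queue and len(visited) < max_pages:
        url = queue.popleft()
        html = fetch(url)
        if html is None:
            continue
        visited.append(url)
        parser = LinkParser()
        parser.feed(html)
        for href in parser.links:
            link = urljoin(url, href)  # resolve relative links
            if urlparse(link).netloc == host and link not in seen:
                seen.add(link)
                queue.append(link)
    return visited


# Hypothetical in-memory site used in place of real HTTP requests.
SITE = {
    "https://example.com/": '<a href="/a">A</a> <a href="/b">B</a>',
    "https://example.com/a": '<a href="/b">B</a> <a href="https://other.example/x">X</a>',
    "https://example.com/b": '<a href="/">Home</a>',
}
```

Running `crawl("https://example.com/", SITE.get)` visits the three pages on `example.com` once each and skips the link to the other host. The `seen` set is what prevents the crawler from looping when pages link back to each other.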

Moreover, advanced web crawlers are equipped with features that enhance their efficiency, such as multi-threading and intelligent parsing capabilities. These features allow crawlers to extract vast amounts of information quickly and effectively. As businesses increasingly rely on data-driven insights, harnessing the power of web crawler tools to automate and optimize data extraction processes becomes more critical.
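The multi-threading mentioned above can be sketched with Python's standard `concurrent.futures` module. The `fetch_all` helper and its `max_workers` default are assumptions for illustration. `fetch` is any callable that downloads one URL.

```python
from concurrent.futures import ThreadPoolExecutor


def fetch_all(urls, fetch, max_workers=4):
    """Fetch every URL concurrently and return a {url: result} dict."""
    urls = list(urls)  # allow any iterable; we iterate over it twice
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map yields results in the same order as the input URLs.
        results = list(pool.map(fetch, urls))
    return dict(zip(urls, results))
```

Threads help here because most of a scraper's time is spent waiting on the network. The worker count should stay small enough to respect the target site's rate limits.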

Legal Considerations in Web Scraping

As web scraping becomes more prevalent, understanding the legal ramifications is essential for individuals and organizations engaging in data extraction. Many websites have specific terms of service that dictate how data can be used, and violating those terms could result in legal action. Therefore, it is crucial for scrapers to familiarize themselves with these regulations to ensure compliance and avoid potential legal issues.

Additionally, keeping abreast of changes in privacy laws, such as the General Data Protection Regulation (GDPR) in Europe, is vital for ethical web scraping practices. Respecting data protection regulations helps foster trust and credibility while safeguarding user privacy. Legal considerations must, therefore, be integrated into any data scraping strategy to ensure responsible and compliant data usage.

Challenges in Web Scraping and How to Overcome Them

Web scraping presents various challenges that can hinder effective data collection. A common one is dynamic content that loads via JavaScript: a scraper that only downloads the initial HTML never runs the page’s scripts, so the data it wants may be missing from the response. To overcome this, a headless browser, driven by a browser automation tool like Selenium, can render the page before the content is extracted.

Moreover, websites often employ anti-scraping measures, including CAPTCHAs and IP blocking, to thwart automated data collectors. To navigate these challenges, scraper operators can implement strategies such as rotating IP addresses, utilizing proxy servers, and adapting scraping frequency to mimic human interaction. By strategically addressing these challenges, users can develop robust web scraping solutions that efficiently operate even in restrictive environments.

Ethical Web Scraping Practices

Ethical web scraping practices are essential to maintaining integrity and responsibility while gathering data online. It is important for individuals and organizations to consider the impact of their data collection methods on website owners and users. Scraping data should respect the intellectual property rights of content creators, and obtaining permission when necessary can help foster a more ethical landscape for data extraction.

Furthermore, respecting user privacy and adhering to existing data protection laws are vital components of ethical scraping. Clearly communicating how collected data will be used can also help to build trust among users. By prioritizing ethical considerations, those involved in web scraping can create more sustainable practices that encourage innovation while respecting the rights and interests of others.

Future Trends in Web Scraping

The future of web scraping is poised for significant evolution, driven by advancements in artificial intelligence and machine learning. These technologies enable more intelligent data extraction methods, allowing scrapers to not only collect data but also analyze and interpret it in real-time. This next level of sophistication is set to transform how businesses utilize web-sourced information, turning raw data into valuable insights.

Additionally, as data privacy regulations continue to evolve, web scraping technologies will need to adapt to ensure compliance while still being effective. The future will likely see a greater emphasis on ethical scraping practices and transparent data usage, as businesses recognize the importance of maintaining trust with their users. These trends indicate that web scraping will play an increasingly integral role in data-driven decision-making across various industries.

Frequently Asked Questions

What are the best web scraping techniques for beginners?

For beginners, the best web scraping techniques include using tools like Beautiful Soup and Scrapy, which simplify the process of extracting information from websites. Additionally, understanding HTML structure is crucial as it allows you to effectively target the data you need. Tutorials and online courses are also available to help you get started with these data extraction methods.

How can I automate data collection using web scraping?

To automate data collection, consider using web crawler tools that can navigate websites and perform tasks without manual intervention. Tools like Selenium can simulate user actions, making it easy to scrape content automatically. Additionally, scheduling scripts using cron jobs or Task Scheduler can ensure your data extraction processes run at set intervals.

Are there specific web scraping techniques for handling dynamic websites?

When dealing with dynamic websites, it’s essential to use a headless browser driven by an automation tool such as Puppeteer or Selenium, which can render JavaScript. These tools allow you to scrape content that loads dynamically, ensuring you extract the necessary information effectively.

What legal considerations should I be aware of when scraping content from websites?

When scraping content from websites, review the site’s terms of service, which may restrict automated access or reuse of its data. Respect the website’s robots.txt file as well; it is not a legal document, but it signals which parts of the site crawlers are allowed to access. Additionally, ensure compliance with copyright laws and data protection regulations, as unauthorized data extraction can lead to legal issues.

How do web crawler tools improve the efficiency of data extraction?

Web crawler tools enhance the efficiency of data extraction by automating the process of navigation and data collection from multiple web pages. They can be configured to follow links, access specified data points, and output collected information in structured formats, saving time compared to manual scraping methods.
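As one example of a structured output format, scraped records can be written as CSV with Python's standard `csv` module. The `to_csv` helper and the `name` and `price` field names are hypothetical. The function returns text so the result is easy to inspect before saving it to a file.

```python
import csv
import io


def to_csv(records, fieldnames):
    """Serialize a list of dict records to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fieldnames)
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


# Hypothetical records as a scraper might produce them.
rows = [
    {"name": "Widget", "price": "9.99"},
    {"name": "Gadget", "price": "24.50"},
]
csv_text = to_csv(rows, ["name", "price"])
```

The same records could just as well be written as JSON or loaded into a database; CSV is used here only because spreadsheets and analysis tools open it directly.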

Can I use API endpoints for scraping content instead of traditional scraping techniques?

Yes, using API endpoints for scraping content is often more efficient as APIs provide structured data in a predictable format. This method requires less effort in parsing HTML and is generally more reliable than traditional scraping techniques, especially if the website offers a well-documented API.
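To show why structured API data is easier to work with, the sketch below parses a JSON response body directly, with no HTML parsing step. The payload shape (`items`, `name`, `price`, `next_page`) and the `parse_products` helper are invented for this example. A real API defines its own fields in its documentation.

```python
import json

# Hypothetical response body; real APIs define their own field names.
SAMPLE_RESPONSE = (
    '{"items": [{"name": "Widget", "price": 9.99},'
    ' {"name": "Gadget", "price": 24.5}], "next_page": null}'
)


def parse_products(body):
    """Return (name, price) pairs from a JSON response body."""
    payload = json.loads(body)
    return [(item["name"], item["price"]) for item in payload["items"]]
```

Because the fields are named and typed, prices arrive as numbers rather than as strings that need cleaning, and a change to the site's page layout does not break the extraction.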

What are common challenges faced in web scraping and how can they be overcome?

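Common challenges in web scraping include handling CAPTCHAs, dynamic content, and anti-scraping measures. Selenium can render dynamic content, but it does not solve CAPTCHAs; the practical response is to slow down so they are triggered less often, or to use an official API where one exists. Proxies can reduce the risk of IP bans, and delays between requests help mimic human behavior during data extraction.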

What tools are recommended for extracting information from websites?

Tools recommended for extracting information from websites include Beautiful Soup and Scrapy for Python users, and tools like Octoparse and ParseHub for non-coders. Each offers unique features tailored to different needs in web scraping and data collection.

| Key Point | Description |
|---|---|
| Restrictions on Access | Some sites, such as The New York Times, restrict automated scraping of their content through access limitations and legal constraints. |
| Manual Extraction | Users can manually collect information from web pages, which involves copying text and downloading images. |
| Creating Scrapers | When developing a web scraper, various techniques and tools can be utilized, including libraries like Beautiful Soup and Scrapy for effective scraping. |
| Specific Content Analysis | For analyzing specific content, users should provide the details to receive tailored guidance. |

Summary

Web scraping techniques play a crucial role in collecting data from websites. Although automated scraping is often restricted on certain platforms, understanding how to manually extract information or build your own scrapers can greatly enhance your web data collection efforts. Leveraging powerful libraries and tools can streamline the process, making it easier to gather valuable data for analysis and research.