Web Scraping Tips: Essential Techniques for Beginners

Web scraping lets you gather and analyze information from websites at a scale that manual copying cannot match. With sound data extraction methods, you can work through complex websites and pull out only the data you need. HTML parsing techniques improve the accuracy of your results by targeting structured elements rather than raw text. Combined with the right web scraping tools, these methods turn raw pages into data you can analyze. Whether you are extracting product details, market trends, or user reviews, the tips below will strengthen your data-gathering workflow.

Collecting data from websites, sometimes called web data aggregation, relies on tools that handle content extraction and text analysis. A reliable approach to parsing a website's structure lets you surface useful information from large volumes of online content. As more decisions come to depend on data, these skills are valuable in any analytical role.

Understanding Web Scraping Basics

Web scraping is the automated method of extracting information from websites, which can be highly beneficial for various applications such as data analysis, market research, and even academic purposes. By utilizing tools and techniques, you can scrape web content efficiently, allowing you to gather large sets of data without manual effort. It’s essential to understand the legal implications and ethical considerations of web scraping to ensure compliance with a site’s terms of service.

When beginning web scraping, familiarity with HTML parsing techniques is crucial. Websites are built using HTML, and understanding how to navigate this structure is key to successful data extraction. Consider using libraries like Beautiful Soup or Scrapy, which simplify the process of scraping web content by providing methods for selecting HTML elements and extracting the necessary data. This foundational knowledge allows you to move towards more complex scraping tasks.
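
As a minimal sketch of what "selecting HTML elements and extracting the necessary data" means in practice, the example below collects every link from a small page. It uses Python's built-in `html.parser` as a stand-in so that it runs without extra installs; Beautiful Soup offers a more convenient interface for the same task. The `LinkCollector` class, the `extract_links` helper, and the sample HTML are illustrative assumptions, not part of any library.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collect (href, text) pairs for every <a href=...> element."""

    def __init__(self):
        super().__init__()
        self.links = []      # finished (href, text) pairs
        self._href = None    # href of the <a> currently open, if any
        self._text = []      # text fragments seen inside that <a>

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        # Only keep text that appears inside a link with an href.
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append((self._href, "".join(self._text).strip()))
            self._href = None


def extract_links(html):
    """Hypothetical helper: parse an HTML string and return its links."""
    parser = LinkCollector()
    parser.feed(html)
    parser.close()
    return parser.links


# Made-up sample page used only for illustration.
SAMPLE_HTML = """
<html><body>
  <h1>Catalog</h1>
  <a href="/items/1">First <b>item</b></a>
  <a href="/items/2">Second item</a>
  <a name="anchor-without-href">ignored</a>
</body></html>
"""

links = extract_links(SAMPLE_HTML)
print(links)
```

The same pattern (find an element, read its attributes, read its text) carries over to other parsing libraries, where it is usually expressed in one or two lines.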

Essential Web Scraping Tools and Technologies

Various web scraping tools are available that can enhance the scraping process and make data extraction more efficient. Some popular choices include Scrapy, Octoparse, and ParseHub, each offering unique features for different scraping needs. Selecting the right tool depends on your specific requirements, such as the complexity of the target website, the volume of data you wish to extract, and your level of coding proficiency.

In addition to tools, learning a programming language can significantly improve your web scraping capabilities. Python is particularly favored for its straightforward syntax and powerful libraries such as Requests and Beautiful Soup. By combining these libraries with your own scripts, you can automate data extraction and reduce the time and effort involved in analyzing web text.

Best Practices for Data Extraction Methods

When implementing data extraction methods, adhering to best practices helps ensure the quality and reliability of the scraped data. Start by inspecting the website’s structure and identifying the elements you need. Choosing correct, specific selectors yields accurate results. Additionally, handling rate limiting and detecting CAPTCHAs can keep your IP address from being blocked.
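
One common way to handle rate limiting is to retry with an increasing delay when the server answers with HTTP 429 (Too Many Requests). The sketch below assumes a hypothetical `fetch` callable that returns a `(status_code, body)` tuple; the function name, the parameter defaults, and the doubling schedule are illustrative choices rather than a prescribed method.

```python
import time


def fetch_with_backoff(fetch, url, max_attempts=4, base_delay=1.0, sleep=time.sleep):
    """Call fetch(url) until it stops returning HTTP 429 or attempts run out.

    `fetch` is a hypothetical callable returning (status_code, body).
    The wait doubles after each 429: base_delay, 2 * base_delay, 4 * base_delay, ...
    `sleep` is injectable so the function can be exercised without real waiting.
    """
    for attempt in range(max_attempts):
        status, body = fetch(url)
        if status != 429:
            return status, body
        # Do not sleep after the final attempt; just give up.
        if attempt < max_attempts - 1:
            sleep(base_delay * (2 ** attempt))
    return status, body
```

In a real script, `fetch` would wrap whichever HTTP client you use. If the server sends a `Retry-After` header, honoring it is usually preferable to a fixed schedule.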

Another best practice is to implement data cleaning techniques post-extraction. Raw data from web scraping can often include duplicates, irrelevant information, or formatting inconsistencies. Using libraries such as Pandas allows you to analyze and manipulate the scraped data efficiently, leading to cleaner datasets that are ready for analysis and visualization. This attention to detail can profoundly impact the insights you derive from your scraped data.
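
The cleaning step can be sketched with plain Python before reaching for Pandas. The example below normalizes whitespace, drops records without a name, and removes exact duplicates. The `name` and `price` fields and the `clean_records` helper are assumptions made for illustration; a Pandas-based version would typically cover the same ground with its own string and de-duplication methods.

```python
import re


def clean_records(records):
    """Normalize whitespace, skip records with an empty name, drop duplicates."""
    seen = set()
    cleaned = []
    for record in records:
        # Collapse runs of whitespace in every string field.
        normalized = {
            key: re.sub(r"\s+", " ", value).strip() if isinstance(value, str) else value
            for key, value in record.items()
        }
        if not normalized.get("name"):
            continue  # treat a missing or empty name as an unusable row
        fingerprint = tuple(sorted(normalized.items()))
        if fingerprint in seen:
            continue  # same content already kept once
        seen.add(fingerprint)
        cleaned.append(normalized)
    return cleaned


# Made-up scraped rows with typical defects.
raw = [
    {"name": "  Blue   Widget ", "price": "19.99"},
    {"name": "Blue Widget", "price": "19.99 "},
    {"name": "", "price": "5.00"},
    {"name": "Red Widget", "price": "24.50"},
]

print(clean_records(raw))
```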

Common Challenges in Web Scraping and Solutions

Web scraping comes with several challenges, such as dynamic content that loads via JavaScript. Many websites use frameworks that render content in the browser, so the HTML returned by a plain HTTP request may not contain the data you see on screen. To tackle this, consider tools like Selenium or Puppeteer, which automate a real browser and let you extract data after the JavaScript has run.

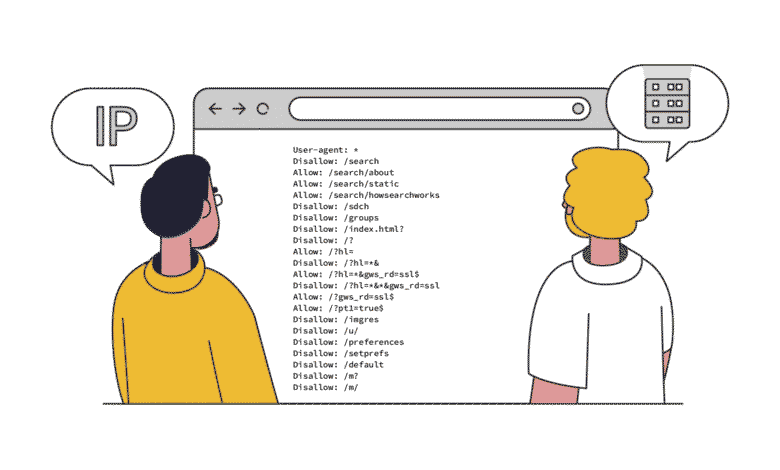

Another challenge involves anti-scraping measures implemented by websites. IP blocking, CAPTCHAs, and user-agent validation can all disrupt your scraping activities. Respecting robots.txt files and adding delays between requests reduce the chance of triggering these defenses, and rotating proxies are a common way to cope with IP blocks, allowing for a smoother scraping experience.
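
Checking robots.txt can be automated with Python's standard `urllib.robotparser` module. The sketch below parses an inline, made-up robots.txt so that it runs offline; the `example-bot` user agent, the `build_parser` helper, and the example.com URLs are placeholders.

```python
from urllib.robotparser import RobotFileParser

# Made-up robots.txt content used only for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""


def build_parser(robots_text):
    """Hypothetical helper: build a parser from robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)

# can_fetch() answers whether a given user agent may request a URL.
allowed = parser.can_fetch("example-bot", "https://example.com/products/")
blocked = parser.can_fetch("example-bot", "https://example.com/private/report")

# crawl_delay() returns the requested pause in seconds, or None if unspecified.
delay = parser.crawl_delay("example-bot")

print(allowed, blocked, delay)
```

Against a live site you would typically call `set_url()` and `read()` on the parser instead of passing the text in directly.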

Advanced HTML Parsing Techniques

To effectively scrape content from websites, mastering advanced HTML parsing techniques is vital. These techniques can significantly enhance your scraping efficiency and accuracy. For example, XPath and CSS selectors are powerful methods for navigating complex HTML structures, allowing you to target specific elements on a page with precision. Utilizing these methods in conjunction with scraping libraries enables you to automate data extraction in a highly efficient manner.
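
To illustrate how an XPath-style selector targets specific elements, the sketch below uses the limited XPath subset supported by Python's built-in `xml.etree.ElementTree`. This only works on well-formed markup, so treat it as a demonstration of the selector idea; real-world HTML usually calls for a more tolerant parser with fuller XPath or CSS selector support. The sample markup, class names, and `product_prices` helper are made up.

```python
import xml.etree.ElementTree as ET

# Well-formed sample markup; the class names are illustrative assumptions.
SAMPLE_XHTML = """
<div id="catalog">
  <div class="product">
    <span class="name">Blue Widget</span>
    <span class="price">19.99</span>
  </div>
  <div class="product">
    <span class="name">Red Widget</span>
    <span class="price">24.50</span>
  </div>
  <div class="banner"><span class="price">0.00</span></div>
</div>
"""


def product_prices(root):
    """Map product name to price, looking only inside div.product elements."""
    prices = {}
    # The predicate [@class='product'] skips the banner, which also has a price.
    for product in root.findall(".//div[@class='product']"):
        name = product.findtext("span[@class='name']")
        price = product.findtext("span[@class='price']")
        prices[name] = float(price)
    return prices


root = ET.fromstring(SAMPLE_XHTML)
print(product_prices(root))
```

Scoping the search to the product container first, then selecting fields relative to it, keeps each name paired with the right price even when similar elements appear elsewhere on the page.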

Additionally, understanding how to handle various data formats, such as JSON and XML that are frequently returned by modern web APIs, boosts your web scraping capabilities. This versatility is key in scenarios where data isn’t easily accessible from HTML alone. By leveraging both HTML parsing techniques and API interactions, you can unlock a wealth of information that is otherwise hidden.
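
When a site exposes its data as JSON, parsing is often simpler than working through HTML. The sketch below flattens a made-up API response into rows using the standard `json` module; the payload shape (`results`, `price.amount`, and so on) and the `flatten_results` helper are assumptions for illustration, since every API defines its own structure.

```python
import json

# Made-up response body; real APIs will differ in field names and nesting.
SAMPLE_RESPONSE = """
{
  "page": 1,
  "results": [
    {"id": 101, "title": "Blue Widget", "price": {"amount": "19.99", "currency": "USD"}},
    {"id": 102, "title": "Red Widget", "price": {"amount": "24.50", "currency": "USD"}},
    {"id": 103, "title": "Mystery Widget"}
  ]
}
"""


def flatten_results(payload_text):
    """Turn the nested payload into flat rows, tolerating a missing price."""
    payload = json.loads(payload_text)
    rows = []
    for item in payload.get("results", []):
        price = item.get("price") or {}
        rows.append({
            "id": item["id"],
            "title": item["title"],
            "amount": float(price["amount"]) if "amount" in price else None,
            "currency": price.get("currency"),
        })
    return rows


for row in flatten_results(SAMPLE_RESPONSE):
    print(row)
```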

Key Considerations for Ethical Web Scraping

As web scraping continues to grow in popularity, ethical considerations are paramount. It is essential to respect the terms of service of the website you are scraping and to check if scraping is allowed. Some sites explicitly prohibit automated data extraction, and failing to adhere to these restrictions can result in legal repercussions. Always analyze these terms before initiating any scraping activities to ensure compliance.

Furthermore, it’s crucial to consider the impact of your scraping activities on the target website. Excessive scraping can strain servers and slow down the site, negatively affecting other users. To mitigate this, adopt polite scraping practices such as pacing requests, sending proper headers, and scraping during off-peak hours. This care protects both the website and the reputation of scraping as a practice.
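
Pacing requests can be as simple as enforcing a minimum interval between calls. The `Throttle` class below is a hypothetical helper, not part of any library. It accepts injectable `clock` and `sleep` functions so the behavior can be checked without real waiting, and the interval you pick is an assumption to adjust per site.

```python
import time


class Throttle:
    """Enforce a minimum interval (in seconds) between consecutive requests."""

    def __init__(self, min_interval, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self._clock = clock
        self._sleep = sleep
        self._last = None  # time of the previous request, if any

    def wait(self):
        """Block until at least min_interval has passed since the last call."""
        now = self._clock()
        if self._last is not None:
            remaining = self.min_interval - (now - self._last)
            if remaining > 0:
                self._sleep(remaining)
                now = self._clock()
        self._last = now
```

A typical use is to create one `Throttle` per target host and call `wait()` immediately before each request to that host.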

Improving Data Quality Through Analysis

Once you have extracted the data through web scraping, the next step is to analyze it to derive meaningful insights. Thorough analysis involves examining the scraped text for patterns, trends, and anomalies that can inform decision-making. This process often includes statistical analysis and using visualization tools to represent the data clearly, making it easier to interpret.

Additionally, quality control measures should be implemented to assess the accuracy of the data collected. Comparing the scraped data against known benchmarks or running validation tests can help identify discrepancies or errors. By prioritizing data quality through rigorous analysis, you ensure that the insights gained from your scraping activities are robust and actionable.
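
A validation pass along these lines can be sketched as a set of per-record checks that separate usable rows from rejected ones. The specific rules below (a non-empty `name`, a positive numeric `price`) and both function names are illustrative assumptions; real projects would encode whatever benchmarks apply to their data.

```python
def validate_record(record):
    """Return a list of problems found in one scraped record (empty if none)."""
    problems = []
    if not record.get("name"):
        problems.append("missing name")
    try:
        price = float(record.get("price", ""))
    except (TypeError, ValueError):
        problems.append("price is not a number")
    else:
        if price <= 0:
            problems.append("price is not positive")
    return problems


def split_valid(records):
    """Partition records into (valid, rejected), keeping the reasons for rejection."""
    valid, rejected = [], []
    for record in records:
        problems = validate_record(record)
        if problems:
            rejected.append((record, problems))
        else:
            valid.append(record)
    return valid, rejected
```

Keeping the rejection reasons alongside each record makes it easier to tell whether a problem lies in the source data or in the scraper's selectors.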

Utilizing Web Scraping for Market Research

Web scraping is a powerful tool for conducting comprehensive market research. By gathering data from competitors’ websites, social media, and review platforms, businesses can gain valuable insights into customer preferences, market trends, and pricing strategies. This information can be pivotal in shaping business decisions and marketing campaigns.

Moreover, automating the process of collecting this data ensures that market insights are always up-to-date. With a well-structured scraping operation, companies can continuously monitor competitor offerings and adjust their strategies accordingly. This agile approach to market research ultimately leads to more informed decision-making and a competitive edge in the market.

The Future of Web Scraping Technologies

As technology evolves, so does the landscape of web scraping. Emerging technologies like artificial intelligence and machine learning are starting to influence how data is extracted and processed. These advancements will facilitate more sophisticated scraping techniques, allowing for the extraction of contextual information and increasing the efficiency of data collection.

Additionally, the rise of no-code web scraping platforms indicates a trend toward making these technologies accessible to non-programmers. These platforms empower a broader audience to engage in data extraction and analysis, ultimately democratizing data access. The future of web scraping promises enhanced capabilities that cater to a variety of users, from researchers to business professionals.

Frequently Asked Questions

What are some effective web scraping tips for beginners?

For beginners in web scraping, it’s crucial to start with web scraping tools that suit your needs. Familiarize yourself with data extraction methods tailored to specific sites, and practice HTML parsing techniques to retrieve data accurately. Always respect each website’s robots.txt file and terms of service to reduce the risk of blocks or legal issues.

How can I improve my data extraction methods in web scraping?

To enhance your data extraction methods, use libraries and frameworks specifically designed for web scraping. Tools like Beautiful Soup for Python can streamline HTML parsing, and learning to analyze web text effectively will help you target the information you need with precision.

What are the best web scraping tools available?

Widely used web scraping tools include Scrapy, Beautiful Soup, and Selenium. Scrapy is a full crawling framework, Beautiful Soup focuses on HTML parsing, and Selenium automates a browser for pages that need JavaScript, so together they cover most scraping challenges.

How can I analyze web text effectively during web scraping?

Effective web text analysis can be achieved by utilizing natural language processing (NLP) tools alongside scraping techniques. Once you’ve scraped web content, apply NLP techniques to extract insights and sentiments from the text, enhancing your data quality.

What legal considerations should I keep in mind while scraping web content?

When scraping web content, always check the site’s terms of service and respect their robots.txt file. Ensure your data extraction methods comply with legal standards to avoid potential issues, and remember that some websites prohibit scraping entirely.

Is HTML parsing necessary for web scraping?

Yes, HTML parsing is a fundamental aspect of web scraping. It allows you to extract meaningful data from the structure of web pages. By leveraging parsing libraries, you can efficiently navigate and manipulate HTML to obtain the data you need.

Can I scrape web content without coding knowledge?

Yes, there are user-friendly web scraping tools available that do not require coding skills. These tools typically provide a visual interface that allows users to scrape web content, perform HTML parsing, and apply simple data extraction methods easily.

What are some challenges I might face while scraping web content?

Common challenges in scraping web content include dealing with dynamic content, CAPTCHAs, and site changes that affect your scraping scripts. It’s important to stay updated on the sites you scrape and adapt your approach using robust HTML parsing techniques.

How can I ensure my web scraping project is efficient?

To ensure efficiency in your web scraping project, plan your data extraction methods carefully. Use multithreading or asynchronous requests to speed up the scraping process, and establish proper error handling in your scripts to manage unexpected issues.
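
The combination of asynchronous requests and error handling might look like the sketch below, which uses the standard `asyncio` module. `fake_fetch` is a stand-in for a real asynchronous HTTP call, and the `fetch_all` helper and its concurrency limit are assumptions you would tune for the target site.

```python
import asyncio


async def fetch_all(urls, fetch, max_concurrency=5):
    """Run fetch(url) for every URL with a cap on simultaneous requests.

    Returns (results, errors): two dicts keyed by URL, so one failing
    page does not abort the whole run.
    """
    semaphore = asyncio.Semaphore(max_concurrency)
    results, errors = {}, {}

    async def worker(url):
        async with semaphore:
            try:
                results[url] = await fetch(url)
            except Exception as exc:  # record the failure and keep going
                errors[url] = exc

    await asyncio.gather(*(worker(url) for url in urls))
    return results, errors


async def fake_fetch(url):
    """Stand-in for a real asynchronous HTTP request (hypothetical)."""
    await asyncio.sleep(0)
    if url.endswith("/broken"):
        raise ValueError("simulated failure")
    return f"body of {url}"


if __name__ == "__main__":
    urls = ["https://example.com/a", "https://example.com/broken"]
    results, errors = asyncio.run(fetch_all(urls, fake_fetch, max_concurrency=2))
    print(results)
    print(errors)
```

The semaphore keeps concurrency bounded, which also limits the load placed on the target server.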

What resources are available for learning effective web scraping techniques?

Numerous online resources are available for learning web scraping techniques, including tutorials, workshops, and forums. Websites like GitHub and Stack Overflow can provide valuable insights into using various scraping tools and HTML parsing techniques.

| Key Point | Description |
|---|---|
| Limitations of Web Scraping | Basic scrapers generally can’t access content behind paywalls or login forms. |
| Understanding HTML Structure | Familiarize yourself with HTML tags to effectively locate elements to scrape. |
| Tools and Libraries | Utilize libraries like Beautiful Soup and Scrapy for efficient scraping. |
| Respecting Robots.txt | Check the website’s robots.txt file to understand what content you are allowed to scrape. |
| Data Storage | Plan how to store the scraped data, e.g. in a CSV file or a database. |
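
For the data storage point, a minimal sketch of writing scraped rows to CSV with the standard `csv` module is shown below. It writes to an in-memory buffer so it runs anywhere; the `rows_to_csv` helper and the field names are hypothetical, and in a script you would pass an opened file instead.

```python
import csv
import io


def rows_to_csv(rows, fieldnames):
    """Serialize a list of dicts to CSV text with a header row."""
    buffer = io.StringIO(newline="")
    # extrasaction="ignore" drops keys that are not listed in fieldnames.
    writer = csv.DictWriter(buffer, fieldnames=fieldnames, extrasaction="ignore")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Made-up rows; the comma in the second name shows automatic quoting.
rows = [
    {"name": "Blue Widget", "price": "19.99", "scraped_from": "page 1"},
    {"name": "Widget, large", "price": "24.50"},
]

print(rows_to_csv(rows, fieldnames=["name", "price"]))
```

CSV suits small, flat datasets; a database is usually a better fit once the data grows or needs to be queried and updated.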

Summary

Effective web scraping starts with understanding the limitations and structure of the target website. Familiarize yourself with the HTML structure so you can locate the content you need. Use established tools and libraries to scrape efficiently, and respect the website’s scraping policies as indicated in its robots.txt file. Lastly, decide how you will store the scraped data, choosing a format that suits your needs.