Scraping Content from NYTimes: How to Extract Information

Scraping content from the New York Times is a common goal for people who want to draw on a reliable news source. Web scraping tools let enthusiasts and professionals extract information from websites such as nytimes.com, including recent articles, trends, and other insights. Automating the collection of HTML content gathers data far faster than copying it by hand, which saves time and supports content discovery and analytical projects across many domains.

Extracting information from popular online publications is sometimes called ‘content harvesting’ or, more loosely, ‘data mining’. With the right techniques and tools, users can gather news articles from major outlets efficiently. Doing this well requires knowing how scraping tools locate and extract data, and how to stay within a site’s terms of use and ethical limits. Access to this information gives researchers, developers, and marketers the data they need to stay ahead in their fields, whether the goal is to improve a data-collection process or simply to stay informed.

Understanding Web Scraping

Web scraping is a technique used to extract information from websites using software tools. This process involves fetching web pages and parsing the data contained within them. The main objective is to gather specific data efficiently while avoiding the manual effort of going through each web page. To implement web scraping effectively, it is essential to understand the structure of HTML content, as it is the backbone of web pages. By mastering HTML and its elements, developers can customize their scraping methods for better accuracy and efficiency.

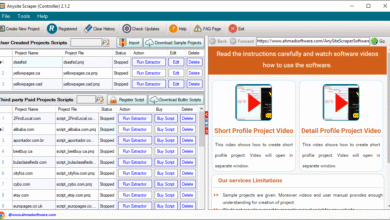

Web scraping tools cater to different levels of expertise. Beginners often start with Beautiful Soup, a Python library that makes parsing HTML straightforward, while Scrapy, also written in Python, is a fuller framework suited to larger crawling projects. Developers who need more control can write custom scrapers in languages such as Python or JavaScript using libraries built for the purpose. Understanding how to scrape websites effectively can unlock a wealth of data for analysis, competitive intelligence, and market research.

Key Methods for Scraping HTML Content

When it comes to scraping HTML content, developers can choose among several methods, depending on their requirements and the complexity of the target website. A common approach is to use a Python library such as Beautiful Soup, which parses HTML and makes it easy to extract data. This method works best on static web pages, where the content is already present in the HTML the server returns rather than being added later by JavaScript.
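As an illustration of the static-page approach, the sketch below pulls headline text out of an HTML string. Beautiful Soup is a third-party package, so to keep the example self-contained it uses Python’s built-in `html.parser` module instead, which works on the same principle of walking the parsed tags. The markup it looks for, `<h2>` elements with a `headline` class, is a hypothetical layout; a real site’s structure has to be inspected first and the matching rules adjusted.

```python
from html.parser import HTMLParser


class HeadlineParser(HTMLParser):
    """Collect the text of <h2 class="headline"> elements (hypothetical markup)."""

    def __init__(self) -> None:
        super().__init__()
        self.headlines: list[str] = []
        self._in_headline = False
        self._buffer: list[str] = []

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs; "class" may hold several names.
        classes = (dict(attrs).get("class") or "").split()
        if tag == "h2" and "headline" in classes:
            self._in_headline = True
            self._buffer = []

    def handle_endtag(self, tag):
        if tag == "h2" and self._in_headline:
            # Collapse runs of whitespace left over from the markup.
            self.headlines.append(" ".join("".join(self._buffer).split()))
            self._in_headline = False

    def handle_data(self, data):
        # Text inside nested tags such as <em> is still captured here.
        if self._in_headline:
            self._buffer.append(data)


def extract_headlines(html: str) -> list[str]:
    parser = HeadlineParser()
    parser.feed(html)
    parser.close()
    return parser.headlines


sample = """
<main>
  <h2 class="headline">First story</h2>
  <h2 class="other">Not a headline</h2>
  <h2 class="headline big">Second <em>story</em></h2>
</main>
"""
print(extract_headlines(sample))  # ['First story', 'Second story']
```

With Beautiful Soup installed, the same extraction would typically be a single CSS-selector query; the standard-library version simply makes the parsing steps explicit.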

For dynamic websites that load content via JavaScript, browser-automation tools such as Puppeteer (for Node.js) or Selenium can be used. Because they drive a real browser, often in headless mode, they can scrape pages that require user interaction or that load data after the initial page load. Where a website provides an official API, using it is often simpler still, because it offers structured access to the data without any need to parse HTML.

Scraping Content from NYTimes Effectively

Scraping content from reputable news sources like the New York Times requires careful consideration of the site’s terms of service and the ethical implications involved. With appropriate methods, users can extract headlines, articles, and other relevant information. Developers typically automate this extraction, building tools that can navigate the website’s structure reliably. Understanding how to scrape sites like NYTimes while respecting copyright law is crucial.

Scrapy can streamline the process of scraping NYTimes content. It lets developers set up crawlers (called spiders) that follow links and gather specific data points, such as article titles, authors, and publication dates. Always check the site’s robots.txt file to see which paths automated clients may crawl and whether a crawl delay is requested.
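Python’s standard library includes `urllib.robotparser`, which answers whether a given user agent may fetch a URL under a robots.txt file. The sketch below parses a made-up robots.txt so it runs offline; the bot name and the example.com URLs are placeholders, not actual NYTimes rules or paths.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content so the example runs without network access.
# For a live site you would instead call rp.set_url(".../robots.txt") and rp.read().
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""


def make_robot_parser(robots_txt: str) -> RobotFileParser:
    rp = RobotFileParser()
    rp.parse(robots_txt.splitlines())  # parse() accepts an iterable of lines
    return rp


rp = make_robot_parser(ROBOTS_TXT)
agent = "example-research-bot"  # placeholder user-agent name
print(rp.can_fetch(agent, "https://example.com/section/world"))  # True
print(rp.can_fetch(agent, "https://example.com/private/page"))   # False
print(rp.crawl_delay(agent))                                     # 5
```

Scrapy can also enforce robots.txt on its own through its `ROBOTSTXT_OBEY` setting, so a check like this is mainly useful for hand-written scrapers.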

Best Practices for Ethical Web Scraping

When engaging in web scraping, it’s vital to practice ethical scraping to maintain good relationships with website owners and comply with legal standards. Always check a website’s terms of service and robots.txt file to determine if they permit scraping. By adhering to their guidelines, you can avoid potential legal pitfalls and ensure your scraping efforts are both respectful and compliant.

Another crucial best practice is to add delays between requests so that your script does not overwhelm a server with many requests in a short period. This reduces the strain on the website and makes it less likely that your IP address will be banned. Setting a User-Agent header also matters: some sites reject requests that carry a default library User-Agent, but disguising a script as a browser to get around restrictions the site has deliberately put in place runs counter to the guidelines above, so a descriptive string that names your scraper is the more transparent choice.
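A minimal sketch of both practices using only the standard library: `Throttle` (a hypothetical helper, not part of any library) enforces a minimum gap between requests, and `build_request` attaches a descriptive User-Agent. The bot name and contact address are placeholders.

```python
import time
import urllib.request
from typing import Optional


class Throttle:
    """Enforce a minimum interval (in seconds) between consecutive requests."""

    def __init__(self, min_interval: float) -> None:
        self.min_interval = min_interval
        self._last: Optional[float] = None  # monotonic time of the previous request

    def wait(self) -> None:
        if self._last is not None:
            remaining = self.min_interval - (time.monotonic() - self._last)
            if remaining > 0:
                time.sleep(remaining)
        self._last = time.monotonic()


# Placeholder identity; replace with your own project name and contact address.
USER_AGENT = "example-research-bot/0.1 (contact: you@example.com)"


def build_request(url: str) -> urllib.request.Request:
    # A descriptive User-Agent tells the site operator who is crawling and how to reach them.
    return urllib.request.Request(url, headers={"User-Agent": USER_AGENT})


def polite_get(url: str, throttle: Throttle, timeout: float = 10.0) -> str:
    """Wait for the throttle, then fetch `url` and return the decoded body."""
    throttle.wait()
    with urllib.request.urlopen(build_request(url), timeout=timeout) as resp:
        charset = resp.headers.get_content_charset() or "utf-8"
        return resp.read().decode(charset, errors="replace")
```

A typical crawl creates one `Throttle`, for example `Throttle(5.0)` for a five-second gap, and passes it to every `polite_get` call. If the site’s robots.txt requests a crawl delay, using at least that value is a sensible default.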

The Role of APIs in Data Extraction

Application Programming Interfaces (APIs) play a significant role in data extraction and can simplify the web scraping process. Many websites, including news outlets and databases, offer APIs that allow users to request specific data in a structured format. This is often preferable to scraping because it provides a straightforward, sanctioned way to access the data, although API use is still governed by the provider’s terms, such as rate limits and restrictions on how the data may be used.

By using APIs, developers can avoid the complexities involved in scraping HTML content and reduce the risk of being blocked by the website. Moreover, APIs typically return data in formats like JSON or XML, which are easier to parse compared to raw HTML. Therefore, utilizing APIs can save both time and resources for those looking to extract information quickly and efficiently.
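The New York Times offers a developer portal whose APIs require a registered API key. The sketch below assumes its Top Stories endpoint follows the pattern `https://api.nytimes.com/svc/topstories/v2/{section}.json?api-key=...` and returns a `results` array whose items carry `title`, `url`, `byline`, and `published_date`; treat both the URL and the field names as assumptions to verify against the official documentation. The sample payload is invented for illustration and is not real API output.

```python
import json
from urllib.parse import urlencode

# Assumed endpoint pattern; confirm it in the NYT developer documentation.
TOP_STORIES_URL = "https://api.nytimes.com/svc/topstories/v2/{section}.json"


def top_stories_url(section: str, api_key: str) -> str:
    """Build the request URL for one section (e.g. "home")."""
    return TOP_STORIES_URL.format(section=section) + "?" + urlencode({"api-key": api_key})


def parse_top_stories(payload: str) -> list[dict]:
    """Pick a few assumed fields out of a JSON response body."""
    data = json.loads(payload)
    return [
        {
            "title": item.get("title", ""),
            "url": item.get("url", ""),
            "byline": item.get("byline", ""),
            "published": item.get("published_date", ""),
        }
        for item in data.get("results", [])
    ]


# Invented payload in the assumed shape, so the example runs offline.
sample = json.dumps({
    "status": "OK",
    "results": [
        {"title": "Example headline", "url": "https://example.com/a",
         "byline": "By A. Writer", "published_date": "2024-01-01T00:00:00-05:00"},
    ],
})
stories = parse_top_stories(sample)
print(stories[0]["title"])  # Example headline
```

Compared with HTML parsing, the extraction here is a dictionary lookup per field, which is the practical advantage of a JSON response.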

The Future of Web Scraping Technologies

The future of web scraping technologies is anticipated to be influenced significantly by advancements in artificial intelligence and machine learning. These technologies are expected to enhance the ability of scraping tools to analyze and interpret the data collected from websites more effectively. As these tools evolve, users may benefit from automated systems that can adapt to changes in website structures or content layout without manual adjustments.

Furthermore, with the increasing regulation of data privacy and security, it is essential for web scraping technologies to integrate compliance measures. Businesses that leverage data scraping will need to evolve their practices, ensuring that they respect user privacy while still gaining insights from the gathered data. This will shape the strategies used in web scraping moving forward and ensure sustainability in the practice.

Common Challenges in Web Scraping

Web scraping, while a powerful technique, is not without its challenges. One major hurdle is anti-scraping measures such as CAPTCHAs, which are designed to keep bots out and create obstacles for developers who want to automate content extraction. Content loaded dynamically with JavaScript poses a related problem, because the data may not appear in the initial HTML at all. Handling these challenges may require techniques such as headless browsing or IP rotation.

Additionally, website structures can change frequently, which can break scraping scripts and require continuous maintenance and updates. This variability poses a challenge for long-term scraping projects, as it demands a commitment to regularly checking and updating the scripts to ensure they function correctly. Developers must be prepared to tackle these issues to maintain effective scraping operations.
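One way to keep this maintenance manageable is to validate every batch of extracted records and fail loudly when a layout change makes the parser return nothing or drop fields, instead of silently storing empty data. The sketch below is a hypothetical helper; the field names `title` and `url` are placeholders for whatever your scraper extracts.

```python
def check_records(records: list[dict], required: tuple[str, ...], min_count: int = 1) -> list[str]:
    """Return a list of problems found in a batch of scraped records.

    An empty list means the batch looks sane. A sudden jump in problems usually
    means the site's markup changed and the parser needs updating.
    """
    problems: list[str] = []
    if len(records) < min_count:
        problems.append(f"expected at least {min_count} records, got {len(records)}")
    for i, record in enumerate(records):
        # Treat absent keys and empty values the same way.
        missing = [field for field in required if not record.get(field)]
        if missing:
            problems.append(f"record {i} missing {', '.join(missing)}")
    return problems


batch = [{"title": "Example headline", "url": ""}]
problems = check_records(batch, required=("title", "url"))
if problems:
    # In a scheduled job you would log this and alert someone instead of printing.
    print("Scraper may need updating:", problems)
```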

Legal Considerations in Data Scraping

Understanding the legal ramifications of web scraping is essential for developers and organizations involved in data extraction. Laws surrounding web scraping can vary significantly between jurisdictions, making it crucial to be informed about both local and international regulations. Courts have heard a number of cases on the legality of scraping, and the outcomes have often turned on whether the scraped data was publicly accessible or restricted.

In addition, respecting copyright laws is fundamental when scraping content from websites. Using scraped data for commercial purposes without permission can lead to severe legal consequences. As such, it is advisable to seek permission or consult with legal professionals before proceeding with scraping, especially if there is a plan to monetize the extracted data.

The Impact of AI on Web Scraping

Artificial Intelligence (AI) is transforming the web scraping landscape by introducing smarter algorithms that can learn from data patterns. Machine learning models can be trained to identify trends and anomalies in scraped data, enhancing the quality of insights extracted from various sources. This AI-driven approach allows for more intelligent parsing of complex HTML content and helps in automating the extraction process more effectively.

Moreover, AI tools may reduce the manual coding required for scraping, since some can adapt to changes in website designs or content structures with less human intervention. This could make web scraping more efficient and user-friendly, easing several of the traditional challenges of collecting data from websites.

Frequently Asked Questions

What is web scraping and how does it apply to scraping content from the New York Times?

Web scraping is the process of extracting information from websites. When it comes to scraping content from the New York Times, it involves using web scraping tools to gather data from its web pages, such as articles and headlines, in a structured format.

Are there any legal considerations when scraping content from the New York Times?

Yes, when scraping content from the New York Times, it’s important to consider copyright laws and the site’s terms of service. Unauthorized scraping can lead to legal issues, so always ensure you comply with these regulations.

What are the best web scraping tools for extracting information from websites like the New York Times?

Some of the best web scraping tools for extracting information from websites include Beautiful Soup, Scrapy, and Selenium. These tools can help you efficiently scrape HTML content from the New York Times and other sites.

Can you provide a simple method on how to scrape HTML content from the New York Times?

A simple method to scrape HTML content from the New York Times involves using Python with libraries like Beautiful Soup and requests. First, send a request to the webpage, then parse the HTML with Beautiful Soup to extract the desired information.
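The two steps can be sketched in Python as follows. To avoid third-party dependencies, the sketch substitutes the standard library’s `urllib.request` for requests and `html.parser` for Beautiful Soup; with those libraries the structure would be the same. It extracts only the page `<title>` and the `<meta name="description">` tag, which are illustrative choices, and the User-Agent string is a placeholder. The `fetcher` parameter is a hypothetical hook that lets the parsing be tested on a saved HTML string without touching the network.

```python
import urllib.request
from html.parser import HTMLParser
from typing import Callable


class MetaParser(HTMLParser):
    """Grab the <title> text and any <meta name=... content=...> pairs."""

    def __init__(self) -> None:
        super().__init__()
        self.title = ""
        self.meta: dict[str, str] = {}
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and a.get("name") and a.get("content") is not None:
            self.meta[a["name"].lower()] = a["content"]

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def fetch(url: str) -> str:
    # Step 1: send the HTTP request (placeholder User-Agent) and decode the body.
    req = urllib.request.Request(url, headers={"User-Agent": "example-research-bot/0.1"})
    with urllib.request.urlopen(req, timeout=10) as resp:
        return resp.read().decode(resp.headers.get_content_charset() or "utf-8", errors="replace")


def scrape_page(url: str, fetcher: Callable[[str], str] = fetch) -> dict:
    # Step 2: parse the returned HTML and pull out the fields of interest.
    parser = MetaParser()
    parser.feed(fetcher(url))
    parser.close()
    return {
        "url": url,
        "title": parser.title.strip(),
        "description": parser.meta.get("description", ""),
    }
```

Calling `scrape_page(url)` without a `fetcher` performs a live request, so check the target site’s terms of service and robots.txt before pointing it at a real page.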

How can I ensure my scraping does not violate the New York Times’ terms of service?

To ensure your scraping activities do not violate the New York Times’ terms of service, review their policies regarding data use and scraping. Use their API if available, and avoid excessive requests that may flag your activity as abusive.

What types of content can I scrape from the New York Times?

You can scrape various types of content from the New York Times, including articles, headlines, images, and metadata. However, be cautious about the legal implications and ensure you are respecting copyright regulations.

What is the process of extracting information from websites like the New York Times using code?

The process involves using a programming language like Python to write a script. You would send an HTTP request to the New York Times, retrieve the HTML response, and then parse that HTML to extract specific information using web scraping libraries.

Is it possible to scrape real-time data from the New York Times?

Yes, it is possible to scrape real-time data from the New York Times by setting up a web scraping script that runs periodically to collect the latest information. However, ensure that this does not violate their scraping policies.
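A minimal polling loop might look like this. `fetch_items` is a hypothetical callable standing in for whatever actually retrieves the latest items (an API call or a scraper), and each item is assumed to be a dict with a `url` key that is used to skip items already collected. For long-running jobs, a scheduler such as cron is usually a better fit than a sleeping Python process.

```python
import time
from typing import Callable, Iterable


def poll(fetch_items: Callable[[], Iterable[dict]], interval: float, rounds: int) -> list[dict]:
    """Call fetch_items once per round, sleeping `interval` seconds between rounds.

    Items whose "url" has already been seen are skipped, so only new items are kept.
    """
    seen: set[str] = set()
    collected: list[dict] = []
    for i in range(rounds):
        for item in fetch_items():
            if item["url"] not in seen:
                seen.add(item["url"])
                collected.append(item)
        if i < rounds - 1:
            # A generous interval keeps the job light on the server.
            time.sleep(interval)
    return collected


# Fake source returning two overlapping batches, standing in for a real fetch.
batches = iter([
    [{"url": "https://example.com/a"}, {"url": "https://example.com/b"}],
    [{"url": "https://example.com/b"}, {"url": "https://example.com/c"}],
])
new_items = poll(lambda: next(batches), interval=0.01, rounds=2)
print([item["url"] for item in new_items])
```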

What are the technical challenges of scraping content from the New York Times?

Technical challenges of scraping content from the New York Times may include dealing with dynamic content, anti-scraping measures, and the need to manage IP blocking or captchas. Using advanced scraping techniques can help overcome these challenges.
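When a site starts throttling a client, it often responds with HTTP 429 (Too Many Requests) or 503 (Service Unavailable), sometimes with a `Retry-After` header giving either a number of seconds or an HTTP date. One well-behaved way to handle this, sketched below, is to honor `Retry-After` when it is present and otherwise fall back to exponential backoff with random jitter (the “full jitter” variant). The function name and default values are illustrative choices, not taken from any particular library.

```python
import random
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime
from typing import Optional


def retry_delay(attempt: int, retry_after: Optional[str] = None,
                base: float = 1.0, cap: float = 60.0) -> float:
    """Return how many seconds to wait before retry number `attempt` (0-based)."""
    if retry_after:
        value = retry_after.strip()
        # Retry-After may be a whole number of seconds...
        if value.isdigit():
            return float(value)
        # ...or an HTTP date such as "Wed, 21 Oct 2015 07:28:00 GMT".
        try:
            when = parsedate_to_datetime(value)
        except (TypeError, ValueError):
            when = None  # unparseable header: fall through to backoff
        if when is not None:
            if when.tzinfo is None:
                when = when.replace(tzinfo=timezone.utc)
            return max(0.0, (when - datetime.now(timezone.utc)).total_seconds())
    # Full jitter: a random wait in [0, min(cap, base * 2**attempt)].
    return random.uniform(0.0, min(cap, base * 2 ** attempt))
```

In a retry loop you would sleep for `retry_delay(attempt, header_value)` after each 429 or 503 response and give up after a fixed number of attempts; slowing down in this way is generally less likely to get a scraper blocked than retrying immediately.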

How can I learn more about web scraping and its applications to sites like the New York Times?

To learn more about web scraping, consider online courses, tutorials, and documentation for web scraping tools like Scrapy and Beautiful Soup. Joining forums or communities focused on web scraping can also enhance your knowledge and skills.

| Key Point | Explanation |
|---|---|
| Access Limitations | Direct scraping of nytimes.com may be restricted, which limits what can be collected from the site itself. |
| Working with HTML | Information can be extracted once the page’s HTML content or structure is available. |

Summary

Scraping content from nytimes.com is a complex task because of the access limitations the site imposes. Extracting data is straightforward once the HTML content or structure is available, but direct scraping of the site may be restricted. Anyone planning to scrape nytimes.com should understand the legal and ethical implications, as well as the technical barriers that can limit access.