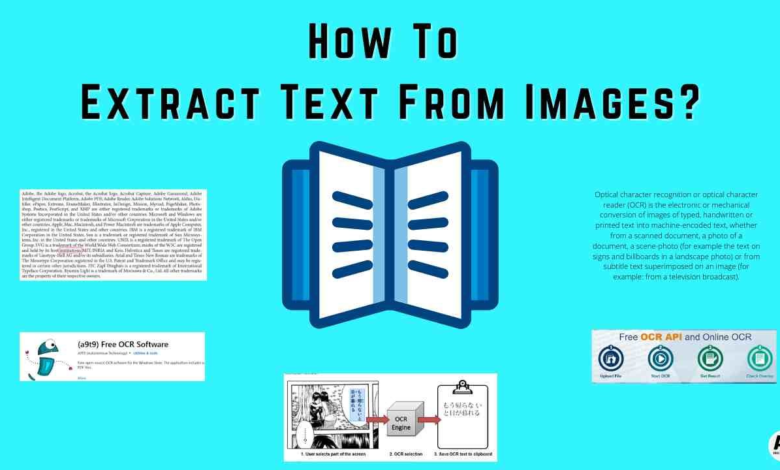

Text Extraction: Techniques for Efficient Data Retrieval

Text extraction converts raw data into usable information. It covers a range of techniques, including HTML content extraction and automated data extraction, for gathering relevant data from varied sources. With web scraping tools, businesses can automate much of their information retrieval, which saves time and, when the extraction logic is sound, yields more consistent results than manual collection. The sections below survey the main data extraction techniques and where each fits into a workflow.

Textual data extraction is also described with terms such as information mining or content retrieval. Organizations use it to draw insights from large datasets and to support data-driven decisions. Automating content collection helps them keep pace with the volume of information published online, and it improves both the efficiency of analysis and the quality of the planning that depends on it.

Understanding HTML Content Extraction

HTML content extraction is a fundamental aspect of web data collection. This process involves retrieving specific data elements from web pages by parsing the HTML structure. The extracted data can be anything from text and images to links and metadata. By utilizing web scraping tools, users can automate the extraction process, making data retrieval efficient and scalable. Understanding the nuances of HTML structure is crucial because it allows scrapers to accurately target the elements they need.

When it comes to HTML content extraction, various techniques can be employed, such as XPath, CSS selectors, and regular expressions. Each of these methods offers unique advantages depending on the complexity of the web pages being scraped. Moreover, incorporating libraries like BeautifulSoup for Python or Cheerio for JavaScript can streamline the process and enhance accuracy. Ultimately, mastering these skills opens new avenues for leveraging data from the web.
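As a minimal sketch of two of these approaches, the example below runs an XPath-style query and a regular expression over the same snippet. It uses only Python's standard library: `xml.etree.ElementTree` supports just a subset of XPath and requires well-formed markup, so this is an illustration rather than a substitute for BeautifulSoup or a full XPath engine. The `PAGE` content, class names, and prices are made up for the example.

```python
import re
import xml.etree.ElementTree as ET

# Hypothetical, well-formed snippet; real pages are usually messier.
PAGE = """
<html><body>
  <div class="product"><h2>Kettle</h2><span class="price">$24.99</span></div>
  <div class="product"><h2>Toaster</h2><span class="price">$39.50</span></div>
</body></html>
"""

root = ET.fromstring(PAGE)

# XPath-style query: every <h2> directly inside a div with class "product".
names = [h2.text for h2 in root.findall(".//div[@class='product']/h2")]

# Regular expression over the raw markup: workable for simple, regular patterns,
# but brittle if attribute order or spacing changes.
prices = [float(p) for p in re.findall(r'class="price">\$(\d+\.\d{2})<', PAGE)]

products = list(zip(names, prices))
print(products)
```

The structural query survives cosmetic changes to the markup better than the regular expression does, which is one reason parsers are usually preferred for anything beyond simple patterns.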

The Role of Data Extraction Techniques

Data extraction techniques are pivotal for transforming unstructured data found on web pages into structured and usable formats. This process can vary widely based on the source of the data and the type of information required. For instance, in cases where information retrieval is crucial, using automated data extraction methods can save significant time and effort. Techniques such as web crawling, parsing, and machine learning algorithms come into play to facilitate the collection and organization of data.
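To make the step from unstructured markup to a structured format concrete, here is a small sketch that turns an HTML table into a list of dictionaries using the standard library's `html.parser`. The `TableParser` class, the `table_to_records` helper, and the sample table are assumptions made for illustration; the sketch assumes a single flat table whose first row is the header.

```python
from html.parser import HTMLParser


class TableParser(HTMLParser):
    """Collects the cell text of every <tr> into a list of rows."""

    def __init__(self):
        super().__init__()
        self.rows = []
        self._row = None   # cells of the row currently being read
        self._cell = None  # text fragments of the cell currently being read

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag in ("td", "th") and self._row is not None:
            self._cell = []

    def handle_data(self, data):
        if self._cell is not None:
            self._cell.append(data)

    def handle_endtag(self, tag):
        if tag in ("td", "th") and self._cell is not None:
            self._row.append("".join(self._cell).strip())
            self._cell = None
        elif tag == "tr" and self._row is not None:
            self.rows.append(self._row)
            self._row = None


def table_to_records(html):
    """Treat the first row as the header and map each later row onto it."""
    parser = TableParser()
    parser.feed(html)
    header, *body = parser.rows
    return [dict(zip(header, row)) for row in body]


SAMPLE = """
<table>
  <tr><th>name</th><th>qty</th></tr>
  <tr><td>Widget</td><td>4</td></tr>
  <tr><td>Gadget</td><td>7</td></tr>
</table>
"""
records = table_to_records(SAMPLE)
print(records)
```

All values come out as strings; converting them to numbers or dates would be a separate cleaning step.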

Moreover, there are several approaches to consider when implementing data extraction techniques. These can range from manual extraction methods, which may be time-consuming, to automated scripts that can run at scheduled intervals. Choosing the right technique depends heavily on the project’s scale and the required accuracy of the extracted data. An understanding of these methodologies will greatly benefit individuals looking to streamline their data collection efforts.

Exploring Web Scraping Tools

Web scraping tools are essential for executing effective data extraction from websites. These tools vary in complexity from simple browser extensions, like Web Scraper or Data Miner, to more sophisticated programming libraries such as Scrapy and Selenium. Each tool offers different functionalities, catering to users with varying levels of expertise. For example, Scrapy is powerful for complex scraping tasks, while simpler tools may suffice for one-off extractions.

Choosing the right web scraping tool can dramatically affect the success of an extraction project. Factors such as ease of use, speed, and the ability to handle JavaScript-heavy sites are critical in the decision-making process. Additionally, understanding the legality of web scraping and adhering to a website’s terms of service is vital to avoid potential issues. With the right tools in hand, users can efficiently gather data that drives informed decision-making and insights in their respective fields.

Information Retrieval Techniques in Data Extraction

Information retrieval techniques complement data extraction by enhancing the quality of the collected information. These techniques focus on obtaining relevant data from large datasets and ensuring that the extracted data meets user needs. Utilizing methods such as keyword searches, query expansion, and relevance ranking can improve the effectiveness of data extraction processes, allowing for better data analysis and insight generation.
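The keyword search, query expansion, and relevance ranking mentioned above can be sketched in a few lines. This is a deliberately simple term-frequency scorer, not a production ranking function; the `rank` helper, the `expansions` mapping, and the sample documents are hypothetical.

```python
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def rank(documents, query, expansions=None):
    """Return (score, document) pairs, highest term-frequency score first."""
    terms = tokenize(query)
    # Query expansion: add related terms for each original query term.
    for term in list(terms):
        terms.extend((expansions or {}).get(term, []))
    scored = []
    for doc in documents:
        counts = Counter(tokenize(doc))
        score = sum(counts[t] for t in terms)
        if score > 0:  # keyword search: drop documents with no matching term
            scored.append((score, doc))
    return sorted(scored, key=lambda pair: pair[0], reverse=True)


DOCS = [
    "Shipping policy: orders ship within two days.",
    "Return policy: returns accepted within 30 days. See the policy page.",
    "Contact us by email.",
]

plain = rank(DOCS, "return policy")
expanded = rank(DOCS, "return policy", expansions={"return": ["returns"]})
print(plain[0], expanded[0])
```

Real systems typically weight terms (for example with TF-IDF or BM25) rather than counting raw occurrences, but the filter-score-sort shape is the same.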

Effective information retrieval relies on algorithms that pick out the most relevant entries from the extracted results. Natural language processing (NLP) can refine those results further so that they align more closely with the end user’s queries. As data volumes grow, these techniques become increasingly important for keeping extraction output usable.

Automated Data Extraction Solutions

Automated data extraction solutions are designed to streamline the process of gathering and processing information from various online sources. These solutions leverage technologies such as Artificial Intelligence and machine learning to create more precise and efficient extraction methods. Automated systems not only save time but also reduce human error, leading to high-quality data that can be used for deep analysis and reporting.

Furthermore, automated data extraction tools can handle large volumes of data with ease, making them ideal for businesses that rely on real-time data for decision-making. As businesses grow and data needs evolve, integrating automated solutions becomes crucial for staying competitive. By adopting these technologies, organizations can ensure they are well-equipped to manage and utilize the vast amounts of data available on the web.

Best Practices in HTML Content Extraction

Implementing best practices in HTML content extraction is essential for maximizing efficiency and accuracy. One critical practice is to ensure that the scraping is conducted ethically, adhering to the website’s robots.txt file to respect the site’s policies. Additionally, structuring code properly and utilizing reliable libraries will enhance the scraping process and prevent downtime due to potential errors in the parsing logic.
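One way to honor robots.txt in code is Python's standard `urllib.robotparser` module. The sketch below parses an inline robots.txt so that it runs without network access; the rules, the `example-bot` user agent, and the example.com URLs are placeholders. In a real scraper you would typically point the parser at the site's own file with `set_url()` and `read()`.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, inlined so the example needs no network.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Check individual URLs before requesting them.
allowed = parser.can_fetch("example-bot", "https://example.com/articles/1")
blocked = parser.can_fetch("example-bot", "https://example.com/private/data")

# Crawl-delay, if present, suggests how many seconds to wait between requests.
delay = parser.crawl_delay("example-bot")

print(allowed, blocked, delay)
```

A robots.txt check covers only the crawling rules the site publishes; the terms of service still need to be read separately.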

Another important aspect is to monitor the changes in HTML structures of target websites. Many websites regularly update their layouts, which could break your scraping scripts if not adjusted accordingly. Regular checks and updates to your extraction methodology will ensure ongoing success in your data collection efforts, allowing you to maintain a reliable flow of information.
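A lightweight way to notice layout changes is to fingerprint a page's tag structure and compare it between runs. The following sketch is one possible approach, not an established standard: it hashes the sequence of tag names and class attributes, so text changes are ignored while structural changes alter the hash. The `structure_fingerprint` helper and the sample snippets are hypothetical.

```python
import hashlib
from html.parser import HTMLParser


class _TagCollector(HTMLParser):
    """Records each opening tag together with its class attribute."""

    def __init__(self):
        super().__init__()
        self.tags = []

    def handle_starttag(self, tag, attrs):
        classes = dict(attrs).get("class") or ""
        self.tags.append(f"{tag}.{classes}")


def structure_fingerprint(html):
    """Hash the tag/class sequence; page text does not affect the result."""
    collector = _TagCollector()
    collector.feed(html)
    joined = "|".join(collector.tags)
    return hashlib.sha256(joined.encode("utf-8")).hexdigest()


OLD = '<div class="post"><h1>Title A</h1><p>Body</p></div>'
SAME_LAYOUT = '<div class="post"><h1>Title B</h1><p>Other body</p></div>'
NEW_LAYOUT = '<article class="entry"><h2>Title B</h2><p>Other body</p></article>'

unchanged = structure_fingerprint(OLD) == structure_fingerprint(SAME_LAYOUT)
changed = structure_fingerprint(OLD) != structure_fingerprint(NEW_LAYOUT)
print(unchanged, changed)
```

Storing the fingerprint from the last successful run and alerting when it differs gives an early warning before selectors start silently returning nothing. Pages with variable-length lists would need the fingerprint restricted to a stable region of the page.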

Advanced Techniques for Data Extraction

As the digital landscape evolves, advanced data extraction techniques have emerged to tackle more complex challenges. Dynamic web scraping handles sites that load content with AJAX or render it client-side with frameworks such as React, where the initial HTML response does not contain the data. This capability is essential for modern web applications whose content changes in response to user interactions.
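Driving a real browser (for example with Selenium) is the general answer for such pages. As a lighter alternative that works only in some cases, some client-rendered pages embed their initial data as JSON inside a `<script>` element, and that JSON can be read without executing any JavaScript. The sketch below assumes such a page; the `JsonScriptParser` class, the `PAGE` markup, and its fields are hypothetical.

```python
import json
from html.parser import HTMLParser


class JsonScriptParser(HTMLParser):
    """Captures and decodes the body of <script type="application/json">."""

    def __init__(self):
        super().__init__()
        self.payloads = []
        self._capturing = False
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/json":
            self._capturing = True
            self._buffer = []

    def handle_data(self, data):
        if self._capturing:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._capturing:
            self.payloads.append(json.loads("".join(self._buffer)))
            self._capturing = False


# Hypothetical page: an empty mount point plus embedded initial state.
PAGE = (
    '<div id="root"></div>'
    '<script id="state" type="application/json">'
    '{"items": [{"id": 1, "title": "First"}, {"id": 2, "title": "Second"}]}'
    "</script>"
)

parser = JsonScriptParser()
parser.feed(PAGE)
titles = [item["title"] for item in parser.payloads[0]["items"]]
print(titles)
```

When no embedded data exists and the content arrives only through later requests, a browser-automation tool remains the more reliable route.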

Machine learning can also make data retrieval more efficient. Models trained to recognize patterns and relevant data points can help decide what to extract, reducing the need for hand-written rules for every page. Used well, these techniques can improve both the quality and the scope of the data gathered from diverse online sources.

Challenges in HTML Content Extraction

Despite its advantages, HTML content extraction is not without challenges. One significant hurdle is websites that deploy anti-scraping measures such as CAPTCHAs or IP blocking to prevent automated access. These defenses can interrupt the scraping process, and any response to them, such as slowing the request rate or using an official API where one exists, has to stay within the website’s terms of service.
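One response that stays on the cooperative side is to slow down when the server signals overload. The sketch below retries with exponential backoff when a request returns HTTP 429 or 503. The `fetch_with_backoff` helper is hypothetical, and the `fetch` callable is injected so the example runs without network access; in practice it would wrap whatever HTTP client you use.

```python
import time


def fetch_with_backoff(fetch, url, max_attempts=4, base_delay=1.0, sleep=time.sleep):
    """Call fetch(url) -> (status, body), backing off on 429/503 responses.

    The wait doubles after each throttled attempt: base_delay, 2x, 4x, ...
    """
    for attempt in range(max_attempts):
        status, body = fetch(url)
        if status not in (429, 503):
            return status, body
        if attempt < max_attempts - 1:
            sleep(base_delay * 2 ** attempt)
    return status, body  # still throttled after the final attempt


# Simulated server: throttles twice, then succeeds.
responses = iter([(429, ""), (429, ""), (200, "ok")])
delays = []  # records the waits instead of actually sleeping

result = fetch_with_backoff(
    lambda url: next(responses),
    "https://example.com/page",
    sleep=delays.append,
)
print(result, delays)
```

If the server sends a `Retry-After` header, honoring that value would be preferable to a fixed schedule.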

Additionally, the inconsistency of HTML structures across different sites can pose practical challenges. Variability in how data is marked up means that a one-size-fits-all approach is often ineffective. Therefore, it’s imperative for users to tailor their extraction methods for each target website to ensure accurate and comprehensive data retrieval.
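One common way to organize per-site tailoring is a rule table keyed by hostname, so the extraction loop stays generic while the patterns vary. The sketch below uses regular expressions for brevity; the hostnames, the patterns, and the `extract` helper are all hypothetical, and a real project might store CSS selectors or XPath expressions in the same shape.

```python
import re
from urllib.parse import urlparse

# Hypothetical per-site rules: field name -> pattern with one capture group.
SITE_RULES = {
    "shop.example.com": {
        "title": r"<h1[^>]*>(.*?)</h1>",
        "price": r'<span class="price">(.*?)</span>',
    },
    "store.example.org": {
        "title": r'<div id="name">(.*?)</div>',
        "price": r'data-price="(.*?)"',
    },
}


def extract(url, html):
    """Apply the rule set registered for the URL's hostname."""
    rules = SITE_RULES.get(urlparse(url).hostname)
    if rules is None:
        raise KeyError(f"no extraction rules for {url}")
    record = {}
    for field, pattern in rules.items():
        match = re.search(pattern, html, re.DOTALL)
        record[field] = match.group(1).strip() if match else None
    return record


first = extract(
    "https://shop.example.com/p/1",
    '<h1 class="t">Kettle</h1><span class="price">24.99</span>',
)
second = extract(
    "https://store.example.org/item?id=9",
    '<div id="name">Toaster</div><button data-price="39.50">Buy</button>',
)
print(first, second)
```

A missing field is returned as `None` rather than raising, which makes it easy to log which rule stopped matching after a site redesign.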

The Future of Data Extraction Techniques

Data extraction techniques will keep changing as technology advances. Progress in Artificial Intelligence and Natural Language Processing should make it easier to choose suitable extraction methods automatically. These advancements point toward scraping tools that learn from previous extractions and adapt to changes in data formats and structures over time.

Furthermore, as data privacy regulations become more stringent, future data extraction techniques will need to incorporate enhanced compliance measures. This will likely include features that respect user consent and ensure privacy is maintained while still achieving comprehensive data gathering. As the landscape shifts, staying informed and adaptable will be crucial for those engaged in data extraction activities.

Frequently Asked Questions

What is text extraction in the context of data extraction techniques?

Text extraction refers to the process of retrieving specific information from documents or web pages, utilizing various data extraction techniques to sift through large volumes of content effectively.

How do web scraping tools facilitate automated data extraction?

Web scraping tools are designed to automate the data extraction process, enabling users to gather structured information from websites, including HTML content extraction, without manual intervention.

What are some common use cases for information retrieval through text extraction?

Information retrieval through text extraction is commonly used in data analysis, academic research, and competitive intelligence, allowing organizations to convert unstructured data into actionable insights.

Can I use HTML content extraction for large-scale data projects?

Yes, HTML content extraction is advantageous for large-scale data projects, as it enables the extraction of vast amounts of information from multiple web pages simultaneously, streamlining the data gathering process.

What is the difference between manual extraction and automated data extraction?

Manual extraction involves human effort to sift through content to find the necessary information, while automated data extraction employs tools and scripts to expedite this process, enhancing efficiency and accuracy.

What challenges might I face when using web scraping tools for text extraction?

Challenges in using web scraping tools for text extraction include dealing with CAPTCHAs, website structure changes, and legal concerns regarding web scraping practices.

How can I optimize my text extraction process for better performance?

To optimize your text extraction process, choose efficient web scraping tools, keep your scripts well designed, and apply data cleansing techniques to the extracted information so that downstream analysis works on consistent data.
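As an illustration of the data cleansing step, the sketch below decodes HTML entities, normalizes whitespace, and removes empty or duplicate values. The `clean` helper and the sample strings are hypothetical, and treating values as duplicates regardless of letter case is an assumption that may not suit every dataset.

```python
import html
import re


def clean(values):
    """Decode entities, collapse whitespace, drop blanks and duplicates."""
    seen = set()
    cleaned = []
    for value in values:
        text = html.unescape(value)                # "&amp;" -> "&"
        text = re.sub(r"\s+", " ", text).strip()   # newlines, tabs, nbsp -> " "
        key = text.casefold()                      # case-insensitive comparison
        if text and key not in seen:
            seen.add(key)
            cleaned.append(text)
    return cleaned


raw = [
    "  Tom &amp; Jerry ",
    "Tom &amp;\nJerry",
    "",
    "tom & jerry",
    "Caf&eacute;&nbsp;Menu",
]
result = clean(raw)
print(result)
```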

What role does LSI play in effective text extraction?

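Latent Semantic Indexing (LSI) applies singular value decomposition to a term-document matrix to surface terms and documents that are related in meaning even when they share few exact words. It is a retrieval technique rather than an extraction step: applied to extracted text, it can help rank or group content by topic, which improves the relevance of what is retrieved.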

Are there specific data extraction techniques tailored for different types of content?

Yes, various data extraction techniques can be tailored for different types of content, such as structured data extraction for tables and semi-structured data extraction for web pages with consistent formatting.

How can I ensure compliance with legal guidelines when using automated data extraction?

To ensure compliance when using automated data extraction, review the website’s terms of service, avoid scraping sensitive information, and consider adhering to robots.txt files to respect the site’s scraping policies.
