Site Scraping: Effective Methods for Data Extraction

Site scraping is a technique for gathering data from web pages automatically. It lets users extract information that would be tedious to copy by hand. Individuals and businesses use it to obtain data for analysis, research insights, or competitive advantage. Effective data extraction depends on understanding how scraping works, in particular automated scraping and HTML parsing. A variety of content scraping tools can streamline the process. As the volume of online content grows, site scraping has become an increasingly common way to put that information to use.

Site scraping is also known as web harvesting and is sometimes described as content aggregation. In each case the goal is to collect and analyze information published on the internet. The practice automates the retrieval of data from web pages, which streamlines workflows for researchers and businesses alike. HTML parsing and specialized scraping software turn unstructured page content into usable formats. These approaches make data more accessible and support better decision-making across many industries, which is why familiarity with scraping tools is increasingly valuable in a data-driven world.

Understanding Site Scraping Techniques

Site scraping, also known as web scraping, is the process of extracting data from websites. Businesses and researchers increasingly use it to gather information that informs decisions and strategy. Web scraping tools automate the extraction of large quantities of data that would be impractical to collect manually. Understanding the different scraping methods helps users choose the approach that best fits their needs.

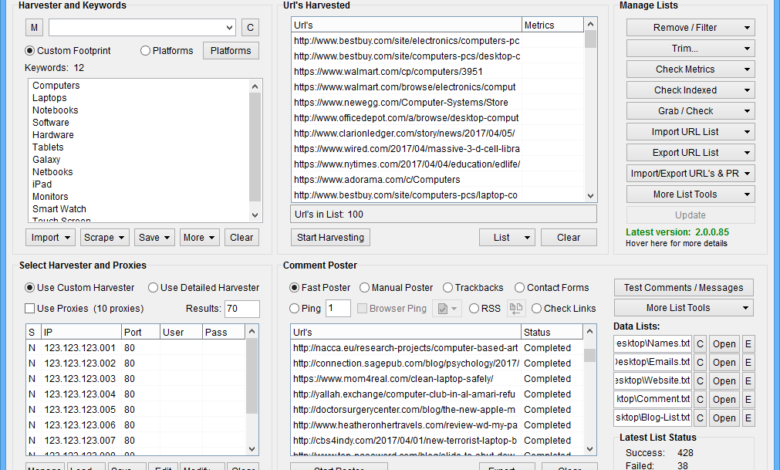

Automated scraping has gained popularity because it is efficient and saves time. Content scraping tools let users schedule regular scrapes, so they receive updated data consistently without repeated manual intervention. This improves productivity and lets organizations spend their time analyzing the scraped data rather than extracting it.
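A scheduled scrape can be sketched as a loop that runs a scraping job at a fixed interval. The minimal Python sketch below assumes a hypothetical `job` callable that performs one scrape. In production, cron or a task scheduler usually plays this role, and the loop stands in for it only for illustration. The `sleep` parameter is injectable so the loop can be tested without waiting.

```python
import time
from typing import Callable, List


def run_on_schedule(
    job: Callable[[], object],
    interval_seconds: float,
    runs: int,
    sleep: Callable[[float], None] = time.sleep,
) -> List[object]:
    """Run `job` `runs` times, pausing `interval_seconds` between runs.

    `job` is a hypothetical callable that performs one scrape and returns
    its result. A failed run is recorded (as the exception) instead of
    stopping the whole schedule.
    """
    results: List[object] = []
    for i in range(runs):
        try:
            results.append(job())
        except Exception as exc:  # keep the schedule alive if one run fails
            results.append(exc)
        # Pause between runs, but not after the last one.
        if i < runs - 1:
            sleep(interval_seconds)
    return results
```

For example, `run_on_schedule(scrape_prices, 3600, runs=24)` would run a hypothetical `scrape_prices` function once an hour for a day.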

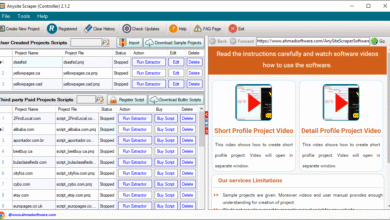

Exploring Content Scraping Tools

Content scraping tools extract and collect data from a wide range of online sources. Many include HTML parsing features that navigate the nested structure of a web page and pull out the relevant information accurately. As a result, these tools can handle diverse content types, including text, images, and other multimedia elements.

Choosing the right content scraping tool is key to successful data extraction. A good tool streamlines the scraping process and offers options for scheduling scrapes, handling dynamic content, and managing data storage. With the right tool, users can gather insights from the websites they follow and apply that information to their business or research initiatives.
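For the data-storage side, one simple option is SQLite through Python's built-in `sqlite3` module. This sketch assumes a hypothetical `products` table keyed by URL. Because `INSERT OR IGNORE` skips rows whose URL is already stored, re-scraping the same page does not create duplicates.

```python
import sqlite3
from typing import Iterable, Tuple


def store_records(
    conn: sqlite3.Connection, records: Iterable[Tuple[str, str, float]]
) -> int:
    """Insert (url, name, price) rows and return how many were new.

    The `products` table and its columns are hypothetical; rows whose URL
    already exists are ignored rather than duplicated.
    """
    conn.execute(
        "CREATE TABLE IF NOT EXISTS products ("
        " url TEXT PRIMARY KEY, name TEXT NOT NULL, price REAL)"
    )
    before = conn.total_changes  # running count of rows changed on this connection
    conn.executemany(
        "INSERT OR IGNORE INTO products (url, name, price) VALUES (?, ?, ?)",
        records,
    )
    conn.commit()
    return conn.total_changes - before
```

Using `sqlite3.connect("scraped.db")` keeps the data on disk between scheduled runs, while `":memory:"` is handy for testing.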

The Importance of Data Extraction

Data extraction is central to any web scraping project, serving as the foundation upon which meaningful analysis is built. Accurately gathering information from websites enables companies to track trends, monitor competitors, and discover customer preferences. Additionally, effective data extraction allows businesses to obtain insights that influence product development, marketing strategies, and overall operational decisions.

Careful data extraction techniques help keep the retrieved information relevant and complete. Checking extracted records during the scraping process helps organizations avoid building on incorrect or incomplete data. For example, they can reject rows with missing or malformed fields. This improves the accuracy of their analyses and leads to better-informed choices and strategies.
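One way to apply that precision is to validate each scraped record before it is stored. The sketch below assumes a hypothetical product schema with required `name` and `price` fields and prices scraped as strings such as `"$1,299.00"`. It splits records into clean rows and rejects rather than silently dropping bad data.

```python
import math
from typing import Dict, List, Tuple

REQUIRED_FIELDS = ("name", "price")  # hypothetical schema for a product record


def _blank(value: object) -> bool:
    """True for missing (None) or whitespace-only values."""
    return value is None or not str(value).strip()


def validate_records(
    records: List[Dict[str, object]],
) -> Tuple[List[Dict[str, object]], List[Dict[str, object]]]:
    """Split scraped records into (valid, rejected).

    A record is valid only if every required field is present and non-blank
    and its price parses as a finite, non-negative number.
    """
    valid: List[Dict[str, object]] = []
    rejected: List[Dict[str, object]] = []
    for rec in records:
        if any(_blank(rec.get(field)) for field in REQUIRED_FIELDS):
            rejected.append(rec)
            continue
        try:
            # Strip a currency symbol and thousands separators before parsing.
            price = float(str(rec["price"]).replace("$", "").replace(",", ""))
        except ValueError:
            rejected.append(rec)
            continue
        if not math.isfinite(price) or price < 0:
            rejected.append(rec)
            continue
        valid.append({**rec, "price": price})
    return valid, rejected
```

Keeping the rejects, instead of discarding them, makes it easy to spot when a site change starts producing bad rows.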

HTML Parsing in Web Scraping

HTML parsing plays a critical role in web scraping, because it is how data is extracted from the nested structure of a web page. Knowing how to parse HTML effectively lets a scraper pinpoint specific elements such as headings, paragraphs, tables, and images. This is essential for ensuring that the scraped data is structured and usable for analysis.

Furthermore, effective HTML parsing can enhance the quality of the scraped content, resulting in data that is not only accurate but also coherent. By employing libraries and tools specifically designed for HTML parsing, developers can craft more sophisticated scraping solutions that can handle a variety of content types and website structures.
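As an illustration of pinpointing specific elements, the sketch below collects heading text and image sources from a page. To keep it dependency-free it uses Python's standard-library `html.parser` instead of Beautiful Soup. The class name `HeadingImageParser` is hypothetical.

```python
from html.parser import HTMLParser
from typing import List, Optional, Tuple


class HeadingImageParser(HTMLParser):
    """Collect heading text (h1-h6) and image `src` values from HTML."""

    HEADINGS = {"h1", "h2", "h3", "h4", "h5", "h6"}

    def __init__(self) -> None:
        super().__init__()
        self.headings: List[Tuple[str, str]] = []  # (tag, text) pairs
        self.images: List[str] = []
        self._current: Optional[str] = None  # heading tag currently open, if any
        self._buffer: List[str] = []

    def handle_starttag(self, tag, attrs):
        # html.parser reports tag names in lowercase.
        if tag in self.HEADINGS:
            self._current, self._buffer = tag, []
        elif tag == "img":
            src = dict(attrs).get("src")
            if src:
                self.images.append(src)

    def handle_endtag(self, tag):
        if tag == self._current:
            # Collapse whitespace, including text from nested tags like <b>.
            text = " ".join("".join(self._buffer).split())
            self.headings.append((tag, text))
            self._current = None

    def handle_data(self, data):
        if self._current is not None:
            self._buffer.append(data)
```

Usage: create a parser, call `parser.feed(html)` and then `parser.close()`, and read `parser.headings` and `parser.images`.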

Ethics and Best Practices in Site Scraping

When scraping sites, it’s crucial to follow ethical standards and best practices. Respecting a website’s terms of service and its robots.txt file helps maintain credibility and avoid legal consequences. Collecting data responsibly in this way also fosters a positive relationship between scrapers and website owners.
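Checking robots.txt can be automated with Python's standard-library `urllib.robotparser`. This sketch passes the robots.txt text in directly so it runs offline. Against a live site you would instead call `set_url("https://example.com/robots.txt")` and `read()` on the parser. The user-agent string and URLs are placeholders.

```python
from urllib.robotparser import RobotFileParser


def allowed_by_robots(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if `user_agent` may fetch `url` under the given robots.txt rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


rules = """User-agent: *
Disallow: /private/
"""
print(allowed_by_robots(rules, "my-scraper", "https://example.com/products/1"))
print(allowed_by_robots(rules, "my-scraper", "https://example.com/private/data"))
```

robots.txt expresses the site owner's wishes, so it complements the terms of service rather than replacing them.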

Following best practices not only mitigates risk but also improves the quality of the collected data. Throttling requests and adding delays between them reduces server load and keeps sites from being overwhelmed. Being transparent about how the data is used, and giving credit where it’s due, further reinforces ethical scraping.
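Throttling can be as simple as enforcing a minimum interval between consecutive requests. The following is a minimal sketch, not a library API. Call `wait()` before each request. `clock` and `sleep` default to `time.monotonic` and `time.sleep` and are parameters only so the behavior can be tested.

```python
import time
from typing import Callable, Optional


class Throttle:
    """Enforce at least `min_interval` seconds between consecutive requests."""

    def __init__(
        self,
        min_interval: float,
        clock: Callable[[], float] = time.monotonic,
        sleep: Callable[[float], None] = time.sleep,
    ) -> None:
        self.min_interval = min_interval
        self._clock = clock
        self._sleep = sleep
        self._last: Optional[float] = None  # time the previous request was allowed

    def wait(self) -> float:
        """Block until the next request is allowed; return the seconds slept."""
        now = self._clock()
        delay = 0.0
        if self._last is not None:
            delay = max(0.0, self._last + self.min_interval - now)
            if delay > 0:
                self._sleep(delay)
        self._last = now + delay
        return delay
```

A fixed interval is the simplest policy; a site’s documented rate limits, if any, should set the actual value.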

Real-World Applications of Automated Scraping

Automated scraping is changing how businesses operate by providing timely data across many industries. In e-commerce, for example, companies use automated scraping to monitor competitors’ pricing, stock levels, and customer reviews. This up-to-date data informs pricing strategies and inventory management, helping businesses stay competitive and respond quickly to market changes.
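Competitor price monitoring usually comes down to comparing two scrape snapshots. This sketch assumes each snapshot is a dict mapping a hypothetical product ID (SKU) to a price. It reports only the products whose price moved by more than a relative threshold.

```python
from typing import Dict, List, Tuple


def price_changes(
    previous: Dict[str, float],
    current: Dict[str, float],
    threshold: float = 0.0,
) -> List[Tuple[str, float, float]]:
    """Return (product, old_price, new_price) for prices that moved.

    `threshold` is a fraction of the old price (0.05 means 5%). Products
    missing from either snapshot are skipped.
    """
    changes = []
    for product in sorted(previous.keys() & current.keys()):
        old, new = previous[product], current[product]
        if old == 0:
            moved = new != 0
        else:
            moved = abs(new - old) / abs(old) > threshold
        if moved:
            changes.append((product, old, new))
    return changes
```

Products that appear or disappear between snapshots can be found separately with set differences of the two key sets.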

Moreover, industries such as finance and real estate rely significantly on automated scraping for collecting market data and trends. By automating the data extraction process, analysts can quickly compile extensive databases that aid in forecasting, investment decisions, or identifying potential property acquisition opportunities. This kind of intelligence is invaluable for enhancing strategic planning and operational efficiency.

Technical Challenges in Web Scraping

As web scraping technology evolves, so do the challenges associated with it. Issues such as website changes, anti-scraping measures, and CAPTCHAs can hinder the scraping process. When websites frequently change their layouts or structures, scrapers must be adjusted accordingly to ensure continued data extraction. This can add to the time and resources required to maintain scraping operations.
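Because layout changes tend to show up as fields that suddenly stop being found, a scraper can check for that and fail loudly instead of storing empty data. The sketch below is one hypothetical heuristic. If an expected field is missing on more than a given share of the scraped pages, it assumes the layout changed. The threshold and the error class are illustrative choices.

```python
from typing import Dict, Iterable, List, Optional


class LayoutChangedError(RuntimeError):
    """Raised when expected fields disappear from most scraped pages."""


def check_layout(
    records: List[Dict[str, Optional[str]]],
    fields: Iterable[str],
    max_missing_ratio: float = 0.5,
) -> None:
    """Raise LayoutChangedError if any field is missing too often."""
    if not records:
        raise LayoutChangedError("no records extracted at all")
    for field in fields:
        missing = sum(1 for r in records if not r.get(field))
        if missing / len(records) > max_missing_ratio:
            raise LayoutChangedError(
                f"field {field!r} missing on {missing}/{len(records)} pages"
            )
```

Running this check after each batch turns a silent breakage into an alert that the scraper needs adjusting.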

Furthermore, websites are increasingly implementing anti-scraping mechanisms that identify and block scraping activities. Solutions such as rotating IP addresses and using headless browsers can help bypass these limitations but may require more advanced technical knowledge to implement effectively. Understanding these challenges is essential for anyone looking to utilize web scraping as part of their data strategy.
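One less adversarial response to anti-scraping measures is to slow down when a server signals overload. For example, HTTP 429 Too Many Requests is often sent with a `Retry-After` header. This sketch computes how long to wait before a retry. It assumes `Retry-After` has already been parsed into seconds (the header may also carry an HTTP date), and its default `base` and `cap` values are arbitrary.

```python
import random
from typing import Optional


def backoff_delay(
    attempt: int,
    retry_after: Optional[float] = None,
    base: float = 1.0,
    cap: float = 60.0,
    jitter: bool = True,
) -> float:
    """Seconds to wait before retry number `attempt` (0-based).

    If the server supplied Retry-After (in seconds), honor it. Otherwise use
    capped exponential backoff, base * 2**attempt, optionally randomized
    ("full jitter") so many clients do not retry in lockstep.
    """
    if retry_after is not None:
        return max(0.0, retry_after)
    delay = min(cap, base * (2 ** attempt))
    return random.uniform(0, delay) if jitter else delay
```

Giving up after a fixed number of attempts, rather than retrying forever, keeps a blocked scraper from adding load indefinitely.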

Selecting the Right Tools for Web Scraping

Selecting appropriate web scraping tools is pivotal for successful data extraction. Many software options are available, each suited to different needs, from simple data gathering to complex scraping workflows. Beautiful Soup, a Python library, provides powerful HTML parsing capabilities, while Scrapy offers a comprehensive crawling framework for larger scraping projects.

Additionally, cloud-based scraping services simplify the scraping process by eliminating the hassle of server management and maintenance. Users can access scalable solutions that adapt to their scraping requirements, making it easier to gather data from multiple sources simultaneously. Evaluating tool features, ease of use, and support options is essential for effective tool selection.

Future Trends in Data Extraction and Scraping

The future of data extraction and scraping looks promising, driven by advancements in AI and machine learning. These technologies have the potential to enhance the accuracy and efficiency of web scraping by allowing tools to learn from data patterns and adapt to changes in website structures automatically. Natural Language Processing (NLP) can further help in understanding and extracting contextually relevant information from diverse content types.

Moreover, increased regulatory focus on data privacy and protection will likely shape the future landscape of web scraping. Organizations must stay informed about compliance requirements and adapt their scraping practices to align with evolving legal frameworks. Balancing efficient data retrieval practices with ethical considerations will become increasingly important for sustainable web scraping in the future.

Frequently Asked Questions

What is site scraping and how does it work?

Site scraping, also known as web scraping, is the process of extracting data from websites using automated tools or scripts. These tools navigate to specified web pages, retrieve the HTML content, and parse it to extract relevant information, making data extraction efficient and scalable.
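Those three steps (navigate to a page, retrieve its HTML, parse it) can be sketched with only Python's standard library. This example extracts links, resolving relative ones with `urljoin`. The function names and the `example-scraper/0.1` user-agent string are hypothetical. Only `extract_links` runs offline; `scrape_links` performs a real HTTP request.

```python
from html.parser import HTMLParser
from typing import List
from urllib.parse import urljoin
from urllib.request import Request, urlopen


class LinkParser(HTMLParser):
    """Collect absolute URLs from <a href="..."> tags."""

    def __init__(self, base_url: str) -> None:
        super().__init__()
        self.base_url = base_url
        self.links: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                # Resolve relative links such as "/about" against the page URL.
                self.links.append(urljoin(self.base_url, href))


def extract_links(html: str, base_url: str) -> List[str]:
    """Parse step: pull links out of already-retrieved HTML."""
    parser = LinkParser(base_url)
    parser.feed(html)
    parser.close()
    return parser.links


def scrape_links(url: str, user_agent: str = "example-scraper/0.1") -> List[str]:
    """Navigate to `url`, retrieve its HTML, and parse out the links."""
    request = Request(url, headers={"User-Agent": user_agent})
    with urlopen(request, timeout=10) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        html = response.read().decode(charset, errors="replace")
    return extract_links(html, url)
```

Keeping retrieval and parsing in separate functions makes the parsing logic testable against saved HTML without touching the network.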

Are there legal considerations to keep in mind while performing site scraping?

Yes, when conducting site scraping, it’s important to respect a website’s terms of service and comply with legal regulations regarding data use and privacy. Always check if the site allows automated scraping and look for specific permissions or API availability to avoid potential legal issues.

What tools are commonly used for automated scraping?

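Several content scraping tools are commonly used for automated scraping. Beautiful Soup is a Python library for HTML parsing. Scrapy is a Python framework for crawling sites and extracting structured data. Puppeteer is a Node.js library for controlling a headless browser (typically Chrome or Chromium), which is useful for pages that render content with JavaScript.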

How can I perform HTML parsing for effective data extraction?

HTML parsing for data extraction is commonly done with libraries such as Beautiful Soup in Python. Beautiful Soup lets you navigate the HTML tree structure easily and extract information accurately from targeted HTML elements.

What are the benefits of using automated scraping for data extraction?

Automated scraping offers numerous benefits, including speed, efficiency, and the ability to collect large volumes of data quickly. It reduces the manual labor associated with data entry and enables real-time data updates from various sources across the internet.

Can I scrape content from any website?

Not necessarily. While many sites provide publicly accessible data, some have restrictions on scraping. Always ensure you review a site’s robots.txt file and terms of service to determine whether scraping their content is permissible.

How does web scraping differ from data mining?

Web scraping is a specific technique of data extraction from websites, while data mining encompasses a broader range of techniques used to analyze and extract patterns or knowledge from large datasets. Web scraping can serve as the initial step in the data mining process.

What are some best practices for ethical web scraping?

Best practices for ethical web scraping include adhering to the website’s terms of service, using rate limiting to avoid overloading servers, respecting robots.txt directives, and obtaining permission when necessary. This ensures responsible scraping and avoids potential legal issues.

Is it possible to scrape dynamic websites?

Yes, scraping dynamic websites is possible by utilizing tools that can render JavaScript, such as Puppeteer or Selenium. These tools allow you to interact with web pages as a user would, enabling the extraction of content that loads dynamically.

What types of data can be extracted using content scraping tools?

Content scraping tools can extract a wide variety of data types, including text, images, links, product details, and pricing information. This data can be used for research, analysis, and database enrichment across various industries.

| Key Point | Description |
|---|---|
| Site scraping limitations | External websites, including nytimes.com, cannot be scraped or accessed directly. |
| Content provision | Users must provide the HTML content or specific text to be analyzed. |
| Analysis support | Content provided by users can be analyzed or summarized. |

Summary

Site scraping can be a valuable way to gather information, but it has significant limitations. For example, some external websites, such as nytimes.com, cannot be scraped or accessed directly. In those cases, users can still get accurate analysis or summaries by providing the specific content they want to discuss. This approach yields useful insights without breaching a site’s scraping rules.